Abstract

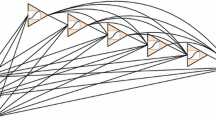

A new algorithm that maps n-dimensional binary vectors into m-dimensional binary vectors using 3-layered feedforward neural networks is described. The algorithm is based on a representation of the mapping in terms of the corners of the n-dimensional signal cube. The weights to the hidden layer are found by a corner classification algorithm, and the weights to the output layer are all equal to 1. Two corner classification algorithms are described. The first is based on the perceptron algorithm and performs generalization. Its computing power may be gauged from the exclusive-OR problem: it was solved in 8 steps, whereas the backpropagation algorithm requires several thousand iterative steps. The second corner classification algorithm presented in this paper does not require any computations to find the weights; however, in its basic form it does not perform generalization.
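
The corner classification idea can be illustrated with a minimal Python sketch. It rests on assumptions that the abstract does not state: hidden neurons use a hard-limiting step activation with a constant bias input of 1, one hidden neuron is assigned to each training corner whose output vector is non-zero, and output neuron j receives weight 1 from exactly those hidden neurons whose corner has bit j set. All function names (`prescribed_weights`, `perceptron_weights`, `train`, `predict`) are hypothetical. `prescribed_weights` is an assumed form of the no-computation rule (+1 for each 1-bit, -1 for each 0-bit, bias 1 - s, where s counts the 1-bits). `perceptron_weights` trains each hidden neuron with the ordinary perceptron rule to fire at its own corner and stay silent at the other training inputs, which may differ from the paper's exact procedure.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Vector = Tuple[int, ...]
Rule = Callable[[Vector, Sequence[Vector]], List[int]]
Hidden = List[Tuple[List[int], Vector]]  # (hidden weights incl. bias, target output vector)


def step(z: int) -> int:
    """Hard-limiting activation: 1 for strictly positive net input, else 0."""
    return 1 if z > 0 else 0


def net(weights: Sequence[int], x: Vector) -> int:
    """Weighted sum of x, with a constant bias input of 1 appended."""
    return sum(w * xi for w, xi in zip(weights, (*x, 1)))


def prescribed_weights(corner: Vector, samples: Sequence[Vector]) -> List[int]:
    # ASSUMED no-computation rule: +1 for each 1-bit, -1 for each 0-bit, bias 1 - s
    # (s = number of 1-bits). Net input is 1 at `corner` and <= 0 at every other
    # corner, so the neuron never generalizes. `samples` is unused.
    s = sum(corner)
    return [1 if b else -1 for b in corner] + [1 - s]


def perceptron_weights(corner: Vector, samples: Sequence[Vector],
                       max_epochs: int = 100) -> List[int]:
    # ASSUMED perceptron variant: target 1 at `corner`, 0 at the other training
    # inputs. One cube corner is always linearly separable from the rest, so the
    # perceptron rule converges; max_epochs is only a safety cap.
    w = [0] * (len(corner) + 1)
    for _ in range(max_epochs):
        errors = 0
        for x in samples:
            target = 1 if x == corner else 0
            out = step(net(w, x))
            if out != target:
                errors += 1
                w = [wi + (target - out) * xi for wi, xi in zip(w, (*x, 1))]
        if errors == 0:
            break
    return w


def train(data: Dict[Vector, Vector], rule: Rule) -> Hidden:
    """One hidden neuron per training corner whose output vector is not all zeros."""
    samples = list(data)
    return [(rule(x, samples), y) for x, y in data.items() if any(y)]


def predict(hidden: Hidden, x: Vector, m: int) -> Vector:
    h = [step(net(w, x)) for w, _ in hidden]
    # Output neuron j: weight 1 from each hidden neuron whose corner has y[j] == 1,
    # so it fires when at least one of those hidden neurons fires.
    return tuple(step(sum(hk for hk, (_, y) in zip(h, hidden) if y[j]))
                 for j in range(m))


XOR = {(0, 0): (0,), (0, 1): (1,), (1, 0): (1,), (1, 1): (0,)}

for rule in (prescribed_weights, perceptron_weights):
    hidden = train(XOR, rule)
    print(rule.__name__, [predict(hidden, x, 1) for x in XOR])
```

On the exclusive-OR table both rules reproduce the mapping. Targeting 0 at every other training input keeps each hidden neuron's task linearly separable, so the perceptron convergence theorem guarantees termination. The number of updates this sketch takes is not intended to match the step count reported for the paper's algorithm.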

About this article

Cite this article

Kak, S. On training feedforward neural networks. Pramana - J Phys 40, 35–42 (1993). https://doi.org/10.1007/BF02898040

DOI: https://doi.org/10.1007/BF02898040