Abstract

The aim of this study was to investigate how reciprocal peer assessment in modeling-based learning can serve as a learning tool for secondary school learners in a physics course. The participants were 22 upper secondary school students from a gymnasium in Switzerland. They were asked to model additive and subtractive color mixing in groups of two, after having completed hands-on experiments in the laboratory. Then, they submitted their models and anonymously assessed the model of another peer group. The students were given a four-point rating scale with pre-specified assessment criteria, while enacting the peer-assessor role. After implementation of the peer assessment, students, as peer assessees, were allowed to revise their models. They were also asked to complete a short questionnaire, reflecting on their revisions. Data were collected by (i) peer-feedback reports, (ii) students’ initial and revised models, (iii) post-instructional interviews with students, and (iv) students’ responses to open-ended questions. The data were analyzed qualitatively and then quantitatively. The results revealed that, after enactment of the peer assessment, students’ revisions of their models reflected a higher level of attainment toward their model-construction practices and a better conceptual understanding of additive and subtractive color mixing. The findings of this study suggest that reciprocal peer assessment, in which students experience both the role of assessor and assessee, facilitates students’ learning in science. Based on our findings, further research directions are suggested with respect to novel approaches to peer assessment for developing students’ modeling competence in science learning.

Introduction

Recent developments in the field of assessment stress the importance of formative approaches, in which assessment is realized as part of the learning process to support the improvement of learning outcomes (Bell and Cowie 2001). Formative assessment has also received emphasis as a mechanism for scaffolding learning in science. Peer assessment is a formative assessment method that actively engages students in the assessment process (Kollar and Fischer 2010; Looney 2011). Topping (1998) defined peer assessment as any educational arrangement where students judge their peers’ performance by providing grades and / or offering written or oral feedback comments. Peer assessment occurs more productively among individuals who have experienced a common learning context (Topping 1998) and who subsequently have similar backgrounds with respect to content knowledge (Falchikov and Goldfinch 2000). Previous studies have focused on the impact that peer assessment might entail on students’ learning. In particular, when employed formatively, peer assessment can improve students’ learning accomplishments (e.g., Falchikov 2003) and their overall performance (e.g., specific skills and practices) (Black and Harrison 2001; Sluijsmans et al. 2002) in various domains including science education (Chen et al. 2009; Crane and Winterbottom 2008; Tseng and Tsai 2007; Tsivitanidou and Constantinou 2016a, 2016b; Tsivitanidou et al. 2011). Moreover, the enactment of peer assessment may foster the development of certain skills and competences, such as students’ skills in investigative fieldwork (El-Mowafy 2014) and also their reflection and in-depth thinking (e.g., Chang et al. 2011; Cheng et al. 2015; Jaillet 2009). Reflection processes can be enhanced in the context of peer assessment, especially reciprocal peer assessment, in which students can benefit from the enactment of the role of both the assessor and the assessee (Topping 2003; Tsivitanidou et al. 2011). 
Learning gains can emerge when students receive feedback from their peers (Hanrahan and Isaacs 2001; Lindsay and Clarke 2001; Tsivitanidou et al. 2011), but also when they provide feedback to their peers (Lin et al. 2001), because they might be introduced to alternative examples and approaches (Gielen et al. 2010) and can also attain significant cognitive progression (Chang et al. 2011; Hwang et al. 2014). This renders peer assessment not only an innovative assessment method (Cestone et al. 2008; Harrison and Harlen 2006) but also a learning tool (Orsmond et al. 1996), in the sense of co-construction of knowledge, that is, constructing new knowledge by the exchange of pre-conceptions, questions, and hypotheses (Labudde 2000). Despite those benefits, according to Crane and Winterbottom (2008), few studies have focused on peer assessment in science; previous research in this area is scarce especially at the primary, that is, grades 1 to 6 (Harlen 2007), and secondary school levels, that is, grades 7 to 12 (Tsivitanidou et al. 2011). Also, few studies have focused on peer assessment in modeling-based learning (Chang and Chang 2013). As a result, very little is known about what students are able to do in modeling-based learning, especially in terms of whether the experience of peer assessment could be useful for them and their peers with respect to the enhancement of their learning.

Theoretical background

Reciprocal peer assessment and peer feedback

Peer assessment can be implemented in various ways. Based on the roles that students enact while implementing peer assessment, the method can be characterized as one-way or two-way / reciprocal / mutual (Hovardas et al. 2014). Reciprocal peer assessment is the type of formative assessment in which students are given the opportunity to assess each other’s work, thus enacting both roles of the assessor and the assessee, whereas in one-way peer assessment, students enact either the role of the assessor or that of the assessee. While enacting the peer-assessor role, students are required to assess peer work and to provide peer feedback for guiding their peers in improving their work (Topping 2003). In the peer-assessee role, students receive peer feedback which can lead to revisions in their work and ultimately enhance their future learning accomplishments (Tsivitanidou and Constantinou 2016a, b).

When learners are engaged in both roles of the assessor and the assessee, in the context of reciprocal peer assessment, certain assessment skills are required (Gielen and de Wever 2015). When enacting the peer-assessor role, students need to be able to assess their peers’ work with particular assessment criteria (Sluijsmans 2002), judge the performance of a peer, and eventually provide peer feedback. Apart from assessment skills (Sluijsmans 2002), peer assessment also requires a shared understanding of the learning objectives and content knowledge among students in order to review, clarify, and correct peers’ work (Ballantyne et al. 2002). Compared to this, in the role of the peer assessee, students traditionally need to “critically review the peer feedback they have received, decide which changes are necessary in order to improve their work, and proceed with making those changes” (for detailed description, see Hovardas et al. 2014, p. 135). In both cases, reciprocal peer assessment engages students in cognitive activities such as summarizing, explaining, providing feedback, and identifying mistakes and gaps, which are dissimilar from the expected performance (Van Lehn et al. 1995).

The provision of peer feedback is also intended to involve students in learning by providing to and receiving from their peers opinions, ideas, and suggestions for improvement (Black and Wiliam 1998; Hovardas et al. 2014; Kim 2005). Related to this, previous research has already indicated that further research on the impact of peer feedback on students’ learning and performance is needed (e.g., Evans 2013; Hattie and Timperley 2007; Tsivitanidou and Constantinou 2016a, b). This is important, since earlier research has already revealed that providing feedback may be more beneficial for the assessor’s future performance than for that of assessees who simply receive feedback (Cho and Cho 2011; Hwang et al. 2014; Kim 2005; Nicol et al. 2014), since giving feedback is related mainly to critical thinking whereas receiving feedback is related mainly to addressing subject content that needs clarification or other improvement (Hwang et al. 2014; Li, Liu, and Steckelberg 2010; Nicol et al. 2014; Walker 2015). In this vein, engaging in self-reflection and in-depth thinking while providing peer feedback may be more beneficial for assessors’ learning (Hwang et al. 2014) than receiving peer feedback is for assessees’ learning (Tsivitanidou and Constantinou 2016a).

The modeling competence in science learning

Science learning involves, among other processes, the translation of ideas, actions, and measurements into models, and the refinement of models through model critiquing and communication (Latour and Woolgar 1979; Lynch 1990 as cited in Chang and Chang 2013). Modeling is the process of constructing and deploying scientific models, and has received great attention as a competence as well, since it facilitates students’ learning in science (Hodson 1993). Educational research indicates that the development of modeling competence enhances students’ learning of science, about science, and how to do science (Saari and Viiri 2003; Schwarz and White 2005). The modeling competence can be fostered in the context of modeling-based learning (Nicolaou 2010; Papaevripidou 2012; Papaevripidou et al. 2014) which refers to “learning through construction and refinement of scientific models by students” (Nicolaou and Constantinou 2014, p. 55). The results obtained in previous studies show that emphasizing modeling in the learning environment not only increases students’ modeling skills (Kaberman and Dori 2009) but also leads to learning gains with respect to inquiry skills and conceptual understanding (Kyza et al. 2011). Papaevripidou et al. (2014) proposed the modeling competence framework (MCF) which suggests the breakdown of modeling competence into two categories: modeling practices and meta-knowledge about modeling and models (Nicolaou and Constantinou 2014; Papaevripidou et al. 2014). It emerged from a synthesis of the research literature on learning and teaching science through modeling (Papaevripidou et al. 2014). Within this framework, it is suggested that learners’ modeling competence emerges as a result of their participation within specific modeling practices, and is shaped by meta-knowledge about models and modeling (Schwarz et al. 2009).

In this study, we focused on students’ modeling practices of model construction and evaluation, because these are essential processes that lead to successful and complete acquisition of the modeling competence (Chang and Chang 2013; National Research Council 2007, 2012). Both practices require an understanding of what constitutes a good scientific model, which, according to Schwarz and White (2005), is defined as “a set of representations, rules, and reasoning structures that allow one to generate predictions and explanations” (p. 166). Model critiquing involves engaging students in discussing the quality of models for evaluation and revision (Chang et al. 2010; Schwarz and Gwekwerere 2006; Schwarz and White 2005; Schwarz et al. 2009). A few studies (e.g., Chang and Chang 2013; Pluta et al. 2011) have provided evidence specific to the educational value of teaching-learning activities that involve the critiquing of models. The findings of the Chang and Chang (2013) study were particularly promising, since they revealed that eighth-grade students produced critiques of sufficient quality and developed a more sophisticated understanding of chemical reactions and scientific models, compared to their own initial conceptualization of the topic and epistemological understanding of science models and modeling. However, the authors stressed the need for more research in this direction, with the ultimate goal being the identification of what students can do when assessing peers’ models and how peer assessment, in modeling-based learning, can foster students’ model-construction practices, as well as conceptual understanding of scientific phenomena (Chang and Chang 2013; Pluta et al. 2011).

Objectives and research questions

In this study, we aimed to examine whether reciprocal peer assessment, when employed formatively, can facilitate students’ learning in science. In particular, we sought to examine how the enactment of the peer-assessor and peer-assessee roles is associated with students’ improvements on their own constructed models, after enacting reciprocal peer assessment. The research question that we sought to address was: Is there any evidence suggesting that the enactment of reciprocal peer assessment is related to secondary school students’ learning benefits in modeling-based learning in the context of light and color? For doing so, we examined, in detail (i) the quality of initial and revised students’ models, as an indication of students’ learning progress; (ii) the type of peer feedback received by assessees in relation to the quality of assessors’ initial models; and (iii) the type of peer feedback received by assessees in relation to the quality of assessees’ initial models.

Methodology

Participants

The physics teacher involved in this study volunteered to participate with his class in classroom implementation of formative assessment methods developed by the EU-funded project Assess Inquiry in Science, Technology and Mathematics Education (ASSIST-ME) (http://assistme.ku.dk/). His class comprised n = 22 students from grade 11 at a gymnasium (the highest track at upper secondary school) in Switzerland. Overall, there were almost equal numbers of girls and boys (12 girls and 10 boys). The participating students were guaranteed anonymity and were assured that the study would not contribute to their final grade at the end of the academic year. As confirmed from the post-instructional interviews with 11 participants, most of them (n = 8) had experienced oral and / or written peer assessment in the past in different subjects.

Teaching material

The sequence was grounded in collaborative modeling-based learning, during which students were asked to work in their groups and collaboratively construct their model. The students worked through the learning material on the topic of light and color. The curriculum material required the students to work, in groups of two (home groups), with a list of hands-on experiments on additive and subtractive color mixing. Those activities lasted four meetings of 45 min each (weeks 1 and 2). In the meeting that followed (week 3), and after having completed the experiments, students in each group were instructed to draw inferences relying on their observations and the gathered data. Their inferences were explicitly expected to lead to a scientific model which can be used to represent, interpret, and predict the additive and subtractive color mixing of light. To do so, students were provided with a sheet of paper, color pencils, and a list of specifications that they were asked to consider when developing their model. Finally, in the last meeting (week 4), the students implemented the peer-assessment activity (model exchange, peer review, revision of models). Overall, it took the student groups six meetings (lessons) of 45 min to complete this sequence, in a total period of 4 weeks.

Procedure

Students worked in randomized pairs in most activities, and the pairs remained unchanged throughout the intervention. There were 11 groups of two students (home groups). The home groups were coded with numbers (01 to 11), and within each group, the students were also coded (as student A and student B). For example, two students assigned to one home group (e.g., group 1) were identified as students 01A and 01B.

As soon as the students had finalized their models in their home groups (see “Teaching material” section), they exchanged their models with other groups; that is, two groups reciprocally assessed their models (e.g., home group 1 exchanged its model with the model of home group 2). It should be noted that, due to the odd number of home groups (n = 11), group 3 assessed the model of group 4 and group 4 assessed the model of group 5. The rest of the home groups mutually assessed each other’s models (see Table 3). The pairs of groups involved in the exchanges were randomly assigned by the teacher. Peer assessors used a four-point Likert rating scale with eight pre-specified assessment criteria for accomplishing the assessment task. The assessment criteria addressed the representational, interpretive, and predictive power of the model, and thus, they were in line with the list of specifications that was given to the students prior to the model-construction phase. Assessors rated their peers’ models on all criteria in accordance with the four-point Likert scale. Along with the ratings, assessors were instructed to provide assessee groups with written feedback (for each criterion separately), in which they were to explain the reasoning behind their ratings, and provide judgments and suggestions for revisions.

The students were instructed to individually assess the model of the peer group assigned to them. For instance, students of home group 1, coded as 01A and 01B, assessed individually the model of home group 2, thereby producing two different peer-feedback sets. The teacher had a whole-class discussion with the students, for clarifying how to use the rating scale with the pre-specified assessment criteria.
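The coding of students and the individual assessment arrangement described above can be sketched in a few lines of Python. This is only an illustration, not part of the study's procedure: the variable and function names are hypothetical, and the assignment table contains only the pairings stated explicitly in the text (the remaining pairings are listed in Table 3 and are not reproduced here).

```python
# Home groups are coded 01 to 11; each has two students, A and B.
groups = [f"{g:02d}" for g in range(1, 12)]
students = [f"{g}{s}" for g in groups for s in "AB"]

# Assessor group -> assessee group. Only the assignments named in the text
# are included; this mapping is deliberately incomplete.
assigned = {"01": "02", "02": "01", "03": "04", "04": "05"}

def feedback_sets(assignments):
    """Each student assesses the assigned peer model individually,
    so every assessor group produces two separate feedback sets."""
    return [(f"{group}{s}", target)
            for group, target in assignments.items()
            for s in "AB"]

sets = feedback_sets(assigned)
print(len(students), "students;", len(sets), "feedback sets listed")
```

Under this arrangement, each assessed model receives two peer-feedback sets, one from each member of the assessor group.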

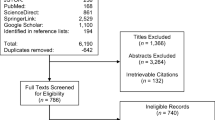

On average, it took each peer assessor 15 min to complete the assessment (SD = 2.0), as measured by the researcher (first author) who was present during the peer-assessment activity. Once the students had completed the assessment of their fellow students’ models on an individual basis, they provided the feedback that they had produced to the corresponding assessee group (see Fig. 1). Therefore, each home group received two sets of peer feedback from another peer group (e.g., home group 1 received two peer-feedback sets from home group 2, one from student 02A and one from student 02B, and vice versa). During the revision phase, students in their home groups collaboratively reviewed the two peer-feedback sets received from the corresponding assessor group. Students were free to decide on whether to make any revisions to their model. In doing so, students in their home groups first reviewed the peer-feedback comments received and through discussion they reached an agreement on whether to proceed with revisions in their models. By the end of the revision phase, students responded, in collaboration with their group mate in their home groups, to two open-ended questions which were given to them for reflection purposes (question A: “Did you use your peer’s feedback to revise your model? Explain your reasoning.” and question B: “Did you revise your model after enacting peer assessment? Explain your reasoning.”). By the end of this intervention, 11 students (each from a different home group) were interviewed individually about their experience with the peer-assessment method. Each interview lasted approximately 15 to 20 min. Students were first asked about any previous experience in peer assessment; then, they were asked whether they found peer assessment, as experienced in this study, useful when assuming the role of the peer assessor and peer assessee, respectively. Interview and post-instructional questionnaire data were used for triangulation purposes.

Fig. 1 The peer-assessment procedure. Students do all the learning activities in pairs and create their initial model. During the peer review and assessment phase, they work alone, whereas during the revision phase, they work in the same pairs as before. For example, the students of home groups 1 and 2 undertake both the role of the assessor and that of the assessee, even though it is not shown in this figure.

Data sources

At the beginning of the intervention, a consent form was signed by the students’ parents for allowing us to use the collected data anonymously for research purposes. The following data were collected: (i) students’ initial models, (ii) peer-feedback reports, (iii) students’ revised models, (iv) post-instructional interviews with 11 students, and (v) home-groups’ responses to the two open-ended questions at the end of the intervention. Copies of all the collected data were given to the researcher, the first author, right after the peer-assessment procedure. Copies of students’ initial and revised models were also given to the researcher, before and after the implementation of the peer-assessment procedure, respectively.

Data analysis

A mixed-method approach was used that involved both qualitative and quantitative analysis of the data. In particular, the data were first analyzed qualitatively and then also quantitatively with the use of the SPSS™ software, except for the interviews and students’ responses to the two open-ended questions, which were only analyzed qualitatively. Each student’s learning progression was examined, as reflected in the quality of their initial and revised models, with respect to the intended learning objectives. Where students applied revisions, we further examined possible parameters which might have led them to proceed with the revisions in their models.

Analysis of students’ models

Each home group created one model. We analyzed the initial model and the revised model (if any) of each home group. For analyzing the initial and revised models, we examined (i) whether students drew on the relevant specifications while constructing and revising their model, (ii) the extent to which students drew on the relevant specifications in a thorough manner, and (iii) the extent to which students drew on the relevant specifications in a valid manner while constructing and revising their models. Three specifications were examined: (a) representation of the phenomenon (objects, variable quantities, processes, and relations), (b) interpretation of the phenomenon (the underlying mechanism of the phenomenon), and (c) predictive power of the model (whether it would allow predictions about the phenomenon). A four-point Likert rating scale was used for the coding process. We then clustered the models into different levels of increasing sophistication portraying each component of the modeling competence framework among learners (for a description of the levels, see Table 1 in the “Quality of initial and revised students’ models” section; examples of models assigned to different levels of the model-construction practices are provided in Fig. 2). For determining the quality of students’ models, we relied on six different levels of increasing sophistication that emerged from students’ model-construction practices as suggested by Papaevripidou et al. (2014).

In addition, we coded separately (i) the number and (ii) the type of revisions identified in the revised models of students. For each home group, we further examined the two peer-feedback sets received from peer assessors, and we searched for connections between the revisions applied by the assessees in their models and the peer-feedback comments. Specifically, we explored the following aspects: (i) the extent to which the revisions identified in assessees’ revised models were based on suggestions included in peer-feedback comments that had been received by the assessees and (ii) the extent to which the peer-feedback comments suggested useful revisions (i.e., changes that could lead to significant improvements with respect to the list of specifications) that were neglected by the assessees. Finally, we coded whether any revisions emerged from the enactment of the peer-assessor role; for example, the assessee(s) borrowed a good idea from their peers’ model while they were enacting the assessor role. The findings that emerged from this analysis were triangulated with data from the post-instructional interviews and the reflective questionnaire.

Analysis of peer feedback

For analyzing peer-feedback data, we focused on qualitative aspects because it has been emphasized in prior research that qualitative feedback is more important than quantitative (e.g., Topping et al. 2000). For analyzing the written comments, we developed a coding scheme including the following dimensions: (i) comprehensiveness (whether the assessors drew on the intended assessment criteria and to which extent); (ii) validity of peer-feedback comments (their scientific accuracy and their correspondence to the models assessed); (iii) verification of peer-feedback comments (comments were perceived as “positive” when including references to what the assessees had already achieved with respect to the list of specifications; likewise, peer-feedback comments were perceived as “negative” when including references to what the assessees had yet to achieve with respect to the list of specifications); (iv) justification of positive and negative comments (i.e., justification offered by the assessor(s) on what the assessees had achieved or not yet achieved with respect to the modeling competence); and (v) guidance statements provided by the assessor(s) to the assessee(s) on how to proceed with possible revisions.

The coding scheme used for analyzing peer-feedback comments comprised 16 items in total. A four- or five-point Likert rating scale was used for the coding of 10 items covering dimensions (i), (ii), and (v) (e.g., for coding the degree of validity of peer-feedback comments: (1) the feedback comments were totally invalid, (2) the feedback comments were mostly invalid, (3) the feedback comments were mostly valid, and (4) the feedback comments were totally valid). For the remaining six items, covering dimensions (iii) and (iv) (i.e., verification and justification of peer-feedback comments), we calculated the frequency of comments: positive, negative, justified and not justified positive, and justified and not justified negative. The peer-feedback comments of each student were coded separately. Each complete sentence included in the peer-feedback comments from each student was analyzed with respect to all the aforementioned categories (comprehensiveness, validity, verification, justification, and guidance).
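As a rough illustration of how the six frequency items for verification and justification could be tallied once each sentence has been coded, consider the sketch below. The coded sentences are invented placeholders, not data from the study, and the tuple layout and the `tally` function are assumptions made only for this example.

```python
from collections import Counter

# Hypothetical coded sentences from one assessor:
# (verification, justified?) for each complete sentence.
coded = [
    ("positive", True),
    ("positive", False),
    ("negative", True),
    ("negative", True),
]

def tally(coded_sentences):
    """Count the six frequency items: positive, negative, and the
    justified / not justified variants of each."""
    counts = Counter()
    for verification, justified in coded_sentences:
        counts[verification] += 1
        label = "justified" if justified else "not justified"
        counts[f"{label} {verification}"] += 1
    return counts

freq = tally(coded)
print(dict(freq))
```

Keeping one tally per student matches the description that each student's peer-feedback comments were coded separately.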

Analysis of interviews and questionnaire

The data gathered from the post-instructional interviews and students’ responses to the questionnaire, which comprised two open-ended questions, were analyzed with open coding, using the method of constant comparison (Glaser and Strauss 1967), solely for triangulation purposes. The interviews were recorded with the consent of the interviewees. The recordings were transcribed and typed into a Word document. Then, the transcripts were imported into an Excel file, separately in different spreadsheets for each question posed during the interviews. In each spreadsheet, the first column was used to identify interviewees’ codes (e.g., 04A for the interviewee who was student A of group 4) and the second column included the actual response. Interviewees’ common responses were clustered into codes in a third column. Then, we compared the statements with each other and labeled similar clusters with the same code (Combs and Onwuegbuzie 2010). After the list of codes was finalized, all coding results were reviewed once more for consistency purposes. The same procedure, except transcription, was repeated for analyzing home groups’ responses to the two open-ended questions of the post-instructional questionnaire.

Inter-rater reliability

To estimate inter-rater reliability of the data coding, a second coder, who had not participated in the first round of coding, repeated the coding process for 40% of the peer-feedback data, the students’ initial and revised models, and the interview and questionnaire data. In all cases, the two raters involved in the data analysis process were also asked to justify their reasoning for their ratings and/or provide illustrative examples. For measuring the inter-rater agreement for nominal and ordinal variables, Krippendorff’s alpha coefficient was calculated and the lowest value was found to be 0.79 for the coding of peer-feedback data and initial and revised models. For measuring the inter-rater agreement for qualitative (categorical) items, Cohen’s kappa coefficient was calculated and the lowest value was found to be 0.8 for the coding of interview and questionnaire data. The differences in the assigned codes were resolved through discussion. All data (except data from the interviews and questionnaire) that were subjected to qualitative analysis were also coded and analyzed quantitatively through the use of non-parametric tests.
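For readers unfamiliar with Cohen's kappa, the following minimal sketch shows the standard two-rater computation, kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e the agreement expected by chance. The codes assigned by the two raters are invented for illustration and do not come from the study; the function name is likewise an assumption.

```python
from collections import Counter

def cohens_kappa(rater1, rater2):
    """Cohen's kappa for two raters coding the same items.
    Undefined (division by zero) if chance agreement equals 1."""
    n = len(rater1)
    # Observed proportion of items on which the raters agree.
    p_o = sum(a == b for a, b in zip(rater1, rater2)) / n
    # Chance agreement from each rater's marginal category counts.
    c1, c2 = Counter(rater1), Counter(rater2)
    p_e = sum(c1[k] * c2[k] for k in c1.keys() | c2.keys()) / n**2
    return (p_o - p_e) / (1 - p_e)

# Hypothetical codes for six interview statements.
r1 = ["useful", "useful", "not useful", "useful", "not useful", "not useful"]
r2 = ["useful", "useful", "not useful", "not useful", "not useful", "not useful"]
kappa = cohens_kappa(r1, r2)
print(round(kappa, 3))
```

In this toy example the raters agree on five of six items, but half of that agreement is expected by chance, so kappa is noticeably lower than the raw agreement rate.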

Results

The data analysis revealed that ten out of 11 home groups revised their models after the enactment of peer assessment. All revisions applied by assessees were found to improve the quality of their initial models; in other words, no case was identified in which assessees proceeded to revisions that undermined the quality of their initial model. This implies that students, as assessees, were able to filter invalid comments included in the peer feedback received.

The results are presented in three major parts: (i) an analysis of the quality of initial and revised students’ models, as an indication of students’ learning progress; (ii) the relation between the quality of peer-feedback comments produced by a student, while acting as an assessor, and the quality of his or her own group’s initial model, i.e., the model constructed at the end of the teaching sequence and before the peer-assessment procedure; and (iii) the relation between the type of peer feedback received by assessees and the quality of assessees’ initial models.

Quality of initial and revised students’ models

We first analyzed students’ initial models (before the enactment of peer assessment). The data analysis revealed different levels of increasing sophistication displayed by the students for each component, which align to some of the levels suggested by Papaevripidou et al. (2014). Table 1 shows six levels of increasing sophistication that illuminate the degree of development of the learners’ model-construction practices, along with the coded student groups assigned to each level. We further analyzed the students’ models with respect to the extent to which they drew on the relevant specifications in a valid manner while constructing their models. The results from this analysis are presented in Table 2.

Six student groups managed to address most of the intended specifications while constructing their own models (students assigned to level 5), although in specific cases the intended specifications were not addressed in a totally valid manner (i.e., student groups 3, 4, 7, and 8). One student group (home group 11 assigned to level 1) hardly drew on any of the specifications while constructing their model (see Table 1). Two models, assigned to level 3 (home groups 1 and 2), provided a valid and moderate representation of the phenomenon (RP), along with a valid mechanistic interpretation of how the phenomenon functions (IP), whereas one model assigned to level 3 (home group 5) included flaws, thus undermining the RP and IP of the model (see Tables 1 and 2).

Lastly, one model (home group 9) provided a valid but moderate RP of the phenomenon; no IP and predictive power (PP) elements were detected. Therefore, the model was assigned to level 2 (see Table 1). None of the groups managed to construct a model with a high PP (level 6) which could be used for predicting how the black color occurs in terms of subtractive color mixing. The analysis of students’ models provided us with some insights into how well these students mastered model-construction practices, in other words, how well they acknowledged the specifications for adequate scientific models. In addition, the analysis informed us of how well the students had conceptualized the specific phenomenon by identifying which of the specifications (RP, IP, or PP) were addressed in a valid or invalid manner in their models (see Table 2).

We then analyzed students’ revised models (after the enactment of peer assessment); the initial and revised models of group 10 are provided as an example (see Fig. 3). The revised models of most of the student groups indicated that the students switched to a higher level of attainment in terms of all relevant aspects of their models, including the validity of those aspects (see Tables 1 and 2). A Wilcoxon signed-rank test showed statistically significant differences between the quality of initial and revised models with respect to the degree to which students had thoroughly addressed the three specifications (RP, IP, and PP) in their models (for six levels of model-construction practices, see Table 1) (Z = −3.270; p < 0.01). Likewise, statistically significant differences were found between the validity of initial and revised models with respect to the RP (Z = −2.0; p < 0.05) and PP (Z = −3.376; p < 0.01).
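To illustrate the kind of paired comparison reported above, the following minimal Python sketch computes the Z statistic of a Wilcoxon signed-rank test under the normal approximation. The level values are hypothetical and are not the study data; the function name is illustrative, and no tie or continuity correction is applied, so statistical packages may report slightly different values.

```python
import math

def wilcoxon_signed_rank_z(before, after):
    """Z statistic of the Wilcoxon signed-rank test (normal approximation,
    no tie or continuity correction). Pairs with zero difference are dropped."""
    diffs = [a - b for b, a in zip(before, after) if a != b]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    # Rank the absolute differences, giving tied values their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    w = min(w_plus, w_minus)  # the smaller rank sum yields a non-positive Z
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    return (w - mean) / sd

# Hypothetical model-construction levels (1 to 6) for eight groups,
# before and after peer assessment. NOT the data of this study.
initial_levels = [1, 2, 3, 3, 3, 5, 5, 5]
revised_levels = [3, 4, 5, 4, 6, 5, 6, 6]
z = wilcoxon_signed_rank_z(initial_levels, revised_levels)
print(f"Z = {z:.3f}")
```

A negative Z of large magnitude indicates that the rank sums of the positive and negative differences are strongly unbalanced, which is the pattern expected when most groups move to a higher level.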

Fig. 3 The initial model (on the left) and revised model (on the right) of home group 10 are given as an example of the kind of revisions applied by assessees. Students of this group were prompted by their peer assessors to represent the primary colors of light and better illustrate and explain subtractive color mixing. They were also encouraged to revise their model, so as to allow the generation of certain predictions (e.g., How is black formed? and What is the result of mixing yellow and cyan?). Note that the text was translated from German to English.

Type of peer feedback received by assessees in relation to the quality of assessors’ initial models

In Table 3, we present the pairs of assessor–assessee groups (i.e., pairs of groups who mutually assessed each other’s models). The quality of the models of all home groups, except those of home groups 6, 8, and 11, improved with respect to the intended learning objectives after the enactment of peer assessment (see also Table 1). Even though students from home groups 6 and 8 proceeded with revisions in their models, the kind of revisions applied did not contribute toward the improvement of the RP, IP, or PP of their models.

Assessees from home groups 2 and 4 received feedback from assessors who had created models of equal quality to their own (see Table 1). The initial models of groups 6 and 7 were both assigned to level 5, but only the revised model of students from group 7 was improved in terms of the intended specifications. Group 7 received feedback from students of group 6 and vice versa. It has to be noted that the model of group 6 was totally valid in terms of all specifications, whereas the model of group 7 was not (see Table 2). Assessees from group 9 (initial model assigned to level 2) received peer feedback from assessors of group 8 whose model was of higher quality (initial model assigned to level 5). Interestingly, groups 3 and 10 received peer feedback from assessors whose models were of a lower quality (groups 5 and 11, respectively), but still proceeded with revisions that improved the quality of their own models. For this reason, we searched for evidence with respect to the potential relationship between the type of peer feedback offered by assessors and the quality of their own initial model.

Our findings show that students assigned to different levels of model-construction practices differed significantly with respect to the type of peer-feedback comments that they offered to assessees in terms of the comprehensiveness of models (Kruskal-Wallis H = 8.124; p < 0.05) (see Table 4). Students assigned to level 2 of model-construction practices (see Table 1) offered the fewest comments that addressed the assessment criteria in a thorough manner, followed by students assigned to levels 3 and 5. Interestingly, comments provided by students assigned to level 1 (group 11) were comprehensive enough, even though those students had not addressed the given specifications in their own model in a thorough manner, and thus, their model was characterized by a superficial RP of the phenomenon, whereas IP and PP were not addressed at all. In other words, the fact that some students did not address certain specifications while developing their own model does not seem to affect their attention to the same criteria while assessing their peers’ models. This may explain the improvement of the revised models of assessees from groups 10 and 3, considering that they received feedback from assessors from groups 11 and 5, respectively.
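The between-level comparison above rests on the Kruskal-Wallis H statistic, which compares rank sums across independent groups. The sketch below shows how H can be computed with the usual tie correction. The comment counts are hypothetical and are not taken from Table 4, and the helper names are illustrative.

```python
def average_ranks(values):
    """Return 1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(values):
        j = i
        while j + 1 < len(values) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    return ranks

def kruskal_wallis_h(groups):
    """Kruskal-Wallis H statistic with tie correction.
    Assumes the pooled observations are not all identical."""
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    total = 0.0
    start = 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        total += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * total - 3 * (n + 1)
    # Tie correction: divide by 1 - sum(t^3 - t) / (n^3 - n).
    counts = {}
    for x in pooled:
        counts[x] = counts.get(x, 0) + 1
    correction = 1 - sum(t ** 3 - t for t in counts.values()) / (n ** 3 - n)
    return h / correction

# Hypothetical numbers of thorough comments per assessor, grouped by the
# level of the assessor's own initial model. NOT the data of this study.
comments_by_level = {
    "level 2": [1, 2],
    "level 3": [3, 4, 3, 5, 4, 2],
    "level 5": [5, 6, 4, 7, 6, 5],
}
h = kruskal_wallis_h(list(comments_by_level.values()))
print(f"H = {h:.3f}")
```

The resulting H is compared against a chi-square distribution with (number of groups − 1) degrees of freedom; with samples as small as those in an exploratory study, exact or permutation-based p values may be preferable.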

Verification and justification of peer-feedback comments were also found to be associated with the quality of assessors’ initial models. A Kruskal-Wallis test showed significant differences among the students (assigned to different levels in terms of their model-construction practices) in the amount of negative comments included in the peer feedback they had produced (i.e., statements about what the assessees had not yet achieved) (H = 7.981; p < 0.05), as well as in the amount of justified negative comments (H = 9.166; p < 0.05). Students assigned to level 5 were those who offered the most negative (justified and not justified) comments (see Table 4). Negative comments and justified negative comments offered by assessors to assessees disclose assessors’ acknowledgment of incorrect or incomplete handling of aspects related to assessees’ models (for more details, see Chen et al. 2009 and Tseng and Tsai 2007). Indeed, four out of seven assessee groups (i.e., groups 4, 6, 7, and 9) received peer feedback from assessors whose initial models were assigned to level 5, and the quality of the revised models of those four groups was improved in terms of the intended specifications, after the enactment of peer assessment.

Overall, all assessors managed to provide sufficiently thorough, constructive, and mostly valid feedback comments to their peers. Peer-feedback comments were found to be generally critical, as the assessors tended to include more negative comments (M = 5.58, N = 134, SD = 2.28; illustrative quote “… the thing [referring to the color circle] with white containing all colors is not enough. Below maybe use red, blue, and green dots instead of white ones”) and fewer positive comments (M = 3.71, N = 89, SD = 2.87; illustrative quote “Good, because it is explained and graphically illustrated”), which were mostly justified. Lastly, assessors provided guidance statements (M = 5.0 comments per assessor) to the assessees on what the assessees still needed to achieve to improve the quality of their models. The guidance statements were found to be mostly valid; this means that the students, as assessors, were able not only to identify most of the weaknesses in their peers’ models, but also to guide them on the next steps to be taken for improving their models.

Type of peer feedback received by assessees in relation to the quality of assessees’ initial models

The type of peer-feedback comments received by assessees was found to be related to the quality of their initial models. In particular, negative comments (i.e., references in the peer-feedback comments to what the assessees had not yet achieved) (Kendall’s τb = −0.373, p < 0.05) and also justified negative comments (Kendall’s τb = −0.348, p < 0.05) were related to the quality of assessees’ initial models. Specifically, the lower the quality of an assessee group’s model was, as determined by the levels of increasing sophistication toward the model-construction practices (see Table 1), the more negative and more justified negative comments (i.e., justified references to what the assessee group had not yet achieved with respect to model-construction practices) were offered by peer assessors.
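The negative coefficients reported above can be read as follows: across pairs of assessee groups, a higher initial model level tends to go with fewer negative comments received. A minimal sketch of Kendall’s tau-b, which adjusts for ties in both variables, is given below. The values are hypothetical and are not the study data, and the function name is illustrative.

```python
import math

def kendall_tau_b(x, y):
    """Kendall's tau-b for two equally long sequences.
    Assumes neither sequence is constant (otherwise the denominator is zero)."""
    concordant = discordant = tied_x_only = tied_y_only = 0
    n = len(x)
    for i in range(n):
        for j in range(i + 1, n):
            dx = x[i] - x[j]
            dy = y[i] - y[j]
            if dx == 0 and dy == 0:
                continue  # tied on both variables: excluded from both factors
            if dx == 0:
                tied_x_only += 1
            elif dy == 0:
                tied_y_only += 1
            elif dx * dy > 0:
                concordant += 1
            else:
                discordant += 1
    # (pairs not tied in y) * (pairs not tied in x)
    denominator = math.sqrt(
        (concordant + discordant + tied_x_only)
        * (concordant + discordant + tied_y_only)
    )
    return (concordant - discordant) / denominator

# Hypothetical initial model levels of assessee groups and the number of
# negative comments each group received. NOT the data of this study.
initial_level = [1, 2, 3, 3, 3, 5, 5, 5]
negative_comments = [9, 8, 6, 7, 5, 4, 3, 5]
tau = kendall_tau_b(initial_level, negative_comments)
print(f"tau-b = {tau:.3f}")
```

Tau-b is a natural choice here because model levels are ordinal and heavily tied (several groups share the same level), a situation in which product-moment correlation would be harder to justify.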

The data analysis revealed that all revisions (in terms of the student group’s attainment of the modeling competence) identified in the revised models of three groups (groups 3, 8, and 10) were suggested in the peer feedback received, whereas in the revised models of seven groups (groups 1, 2, 4, 5, 6, 7, and 9), only some revisions were suggested in the peer feedback received. No revisions were detected in the model of home group 11. In other words, we identified revisions in the models of seven groups which were not suggested in the peer-feedback comments received from peer assessors (see Table 3). For example, students from group 1 added an explanation (i.e., “if green and red coincide, yellow is formed”) in their revised model which was not included in the peer-feedback comments offered by their peer assessors (students from group 2). When examining the initial model of group 2 (peer assessors), we detected a sentence resembling the revision of assessee group 1 (i.e., “We have three sources of light: blue, red, and green. If they coincide, one of the colors shown in the figure is formed”; see Notes). This is an indication that students from group 1 might have borrowed this idea while they were assessing the model of group 2.

Triangulation — with data from the post-instructional reflective questionnaire and the interviews with the students — confirmed those indications. In Table 5, we present the different themes that emerged during the qualitative analysis of those data sources. The results confirm that students proceeded to revise their models, not only due to the reception of peer-feedback comments but also due to the enactment of the peer-assessor role.

Interviewees from all groups stated that they used the peer-feedback comments received from their peers for revising their own models, except the interviewee from group 11, who confirmed that no revisions were applied in their model after the enactment of peer assessment. Interviewees from groups 1, 2, 4, 5, 6, 7, and 9 confirmed that, after the enactment of peer assessment, they proceeded with revisions in their models, relying not only on the peer feedback received but also on the experience that they had gained while acting as peer assessors (e.g., engagement in self-reflection processes, exposure to alternative examples while assessing peers’ models).

Discussion

This study focused on examining how reciprocal peer assessment in modeling-based learning can serve as a learning tool for learners in a secondary school physics course, in the context of light and color. The findings of this study show that reciprocal peer assessment — experienced by the students in the roles of assessor and assessee — enhanced their learning in the selected topic, as inferred by the quality of their revised models. It is vital to consider that between the model-construction and the model-revision phase, no instruction or any other kind of intervention took place; therefore, any possible improvements identified in students’ revised models arose due to the enactment of peer assessment and in particular either due to the experience that students gained while acting as peer assessors or due to the exploitation of peer feedback received in the peer-assessee role or both.

Students, as peer assessors, were found to have beginning skills in providing effective feedback comments to their peers. Particular characteristics of peer feedback (verification and justification) offered by assessors were found to be associated with the quality of assessees’ models. More specifically, the lower the quality of assessees’ initial models, the more negative and justified negative peer-feedback comments were offered by assessors. This indicates that assessors could identify flaws and shortcomings in assessees’ models (thus offering negative comments), justify their comments, and provide suggestions for improvements (offering guidance), which were mostly scientifically accurate and consistent throughout. Suggestions and recommendations for possible ways for improvement (Hovardas et al. 2014; Sadler 1998; Strijbos and Sluijsmans 2010), as well as justified comments (Gielen et al. 2010; Narciss 2008; Narciss and Huth 2006), have been identified by researchers as essential characteristics of effective peer feedback.

Interestingly, the amount of (justified) negative comments offered by peer assessors to peer assessees differed among assessors who had initially constructed models of different quality. Those discrepancies subsequently affected the revisions applied by assessees in their models. In fact, assessees who received feedback from assessors whose initial models were assigned to level 5 were those who revised their models in a way that reflected a higher level of attainment toward the learning objectives. First, these findings point to the implications that the pairing of assessor–assessee groups might have for the effectiveness of peer-assessment activities. Second, the findings suggest that certain characteristics of the peer feedback that students produce are associated with the quality of their own constructed models. It has to be noted that students were provided with scaffolds while enacting the peer-assessor role, which might have helped them to redefine the learning objectives and reconsider the task’s specifications. Therefore, peer assessment bears benefits for students’ learning, also because students are given the opportunity to reconsider the learning objectives while acting as reviewers.

Students, as assessees, acted on most or all suggestions provided by their peers for revising their models. Students in this study were not reluctant to accept their peers as legitimate assessors, contradicting findings from previous studies (e.g., Smith et al. 2002; Tsivitanidou et al. 2011; van Gennip et al. 2010). They used the peer feedback received from their peer assessors for revising their models, and those revisions improved the quality of their models in terms of their representational, interpretative, and predictive power, as well as in terms of the scientific accuracy of their models. They were able to use the peer feedback received wisely, by filtering peer-feedback comments and proceeding only with revisions that improved the quality of their initial model with respect to the intended specifications. Students did not proceed with revisions which could potentially undermine the quality of their model. It seems that students in this study had the skills to interpret feedback in a meaningful way and use it wisely for improving their models. In fact, the analysis revealed that even in cases of receiving invalid feedback comments, assessees were able to filter such invalid comments, as already suggested in previous studies (Hovardas et al. 2014).

However, not all revisions detected in assessees’ revised models were explicitly or implicitly suggested in the peer-feedback comments received. We searched for evidence about the possible reasons which might have led assessees to apply those revisions in their models. The data analysis indicated that assessees revised their models also due to the opportunity to act as assessors. In particular, the findings of this study suggest that when students enact the peer-assessor role, they are exposed to alternative examples (i.e., their peers’ artifacts) (Tsivitanidou and Constantinou 2016a, b), which might inspire students to further revise their own artifacts. Also, the students reconsider the learning objectives that should have been addressed and therefore better appreciate what is required to achieve a particular standard, while enacting the peer-assessor role (Brindley and Scoffield 1998). Moreover, the findings of this study suggest that when students enact the peer-assessor role, they are also engaged in self-reflection processes. Peer assessment, as a process itself, requires self-reflection and in-depth thinking (Cheng et al. 2015). Indeed, students in this study claimed in the post-instructional interviews that while assessing their peers’ models, they were engaged in self-reflection processes. They also reported that the opportunity given to them to compare, at least implicitly, their own model with that of another peer group while assessing made them realize what they had or had not interpreted correctly on the basis of their experimental results. This comparison strategy applied by assessors in this study resembles the comparative judgment approach which has been reported as a method that assessors may endorse when offering peer feedback, even if not instructed to do so (Tsivitanidou and Constantinou 2016a). Tsivitanidou and Constantinou (2016a) showed that assessors may benefit in their learning process when given the opportunity to assess their peers’ work, as they are engaged in self-reflection processes comparing their own work with that of their peers. Accordingly, the idea of assessing peers’ models was fruitful in the sense that assessors had visual and linguistic access to how their peers had represented and interpreted the phenomenon under study, in other words, how their peers had conceptualized the phenomenon.

In this vein, receiving peer feedback while also providing peer feedback was beneficial for students’ learning progress. Previous studies in science education have shown that students can benefit from peer assessment for improving their artifacts (e.g., Prins et al. 2005; Tsai et al. 2002). The findings of this study suggest that those benefits can also arise in modeling-based learning. We can argue that reciprocal peer assessment can serve as a learning method, confirming findings of previous studies in other contexts and teaching approaches (Orsmond et al. 1996), since students in this study benefited from the reciprocal peer-assessment method, not only because of receiving peer feedback but also because they were given the opportunity to act as assessors. The findings of this study have implications for practice and policy. The fact that reciprocal peer assessment in modeling-based learning can facilitate students’ learning in science needs to be considered, first, by policymakers and second, by educators, for integrating peer assessment and modeling-based learning in the curriculum and in the everyday teaching practice, respectively.

This study was carried out in the context of a regular physics course, thus offering ecological validity to the aforementioned findings. On the other hand, this study was an exploratory one with limitations related to the small sample size (n = 22 students). Future studies will need to replicate the findings of the present study with a bigger sample size and in other contexts (i.e., while students exchange feedback for their own constructed models on phenomena and concepts which might require higher-order thinking skills to be analyzed and interpreted). Finally, it would have been useful to record the discussion between students in their home groups while reviewing the peer feedback received from their assessors. This source of data would have allowed us to detect other variables which might have influenced students’ actions during the revision phase.

Peer assessment in modeling-based learning constitutes an area that calls for further research on a number of aspects, with the collective goal of identifying how peer assessment can contribute to acquiring and enhancing modeling practices and how it can enhance learning in science. Research in this direction is also needed to explore the potential benefits that peer assessment may entail in this context.

Notes

It has to be noted that all the quotes presented in this manuscript were translated from German to English.

References

Ballantyne, R., Hughes, K., & Mylonas, A. (2002). Developing procedures for implementing peer assessment in large classes using an action research process. Assessment & Evaluation in Higher Education, 27, 427–441.

Bell, B., & Cowie, B. (2001). The characteristics of formative assessment in science education. Science Education, 85(5), 536–553.

Black, P., & Wiliam, D. (1998). Assessment and classroom learning. Assessment in Education: Principles, Policy, and Practice, 5, 7–74.

Brindley, C., & Scoffield, S. (1998). Peer assessment in undergraduate programmes. Teaching in Higher Education, 3(1), 79–89.

Cestone, C. M., Levine, R. E., & Lane, D. R. (2008). Peer assessment and evaluation in team-based learning. New Directions for Teaching and Learning, 116, 69–78.

Chang, H. Y., & Chang, H. C. (2013). Scaffolding students’ online critiquing of expert- and peer-generated molecular models of chemical reactions. International Journal of Science Education, 35(12), 2028–2056.

Chang, H.-Y., Quintana, C., & Krajcik, J. S. (2010). The impact of designing and evaluating molecular animations on how well middle school students understand the particulate nature of matter. Science Education, 94(1), 73–94.

Chang, C.-C., Tseng, K.-H., Chou, P.-N., & Chen, Y.-H. (2011). Reliability and validity of web-based portfolio peer assessment: a case study for a senior high school’s students taking computer course. Computers and Education, 57(1), 1306–1316.

Chen, N.-S., Wei, C.-W., Wu, K.-T., & Uden, L. (2009). Effects of high level prompts and peer assessment on online learners’ reflection levels. Computers and Education, 52, 283–291.

Cheng, K. H., Liang, J. C., & Tsai, C. C. (2015). Examining the role of feedback messages in undergraduate students’ writing performance during an online peer assessment activity. The Internet and Higher Education, 25, 78–84.

Cho, Y. H., & Cho, K. (2011). Peer reviewers learn from giving comments. Instructional Science, 39(5), 629–643.

Combs, J. P., & Onwuegbuzie, A. J. (2010). Describing and illustrating data analysis in mixed research. International Journal of Education, 2(2), 1–23.

Crane, L., & Winterbottom, M. (2008). Plants and photosynthesis: peer assessment to help students learn. Journal of Biological Education, 42, 150–156.

El-Mowafy, A. (2014). Using peer assessment of fieldwork to enhance students’ practical training. Assessment and Evaluation in Higher Education, 39(2), 223–241.

Evans, C. (2013). Making sense of assessment feedback in higher education. Review of Educational Research, 83, 70–120.

Falchikov, N. (2003). Involving students in assessment. Psychology Learning and Teaching, 3, 102–108.

Falchikov, N., & Goldfinch, J. (2000). Student peer assessment in higher education: a meta-analysis comparing peer and teacher marks. Review of Educational Research, 70, 287–322.

van Gennip, N. A. E., Segers, M. S. R., & Tillema, H. H. (2010). Peer assessment as a collaborative learning activity: the role of interpersonal variables and conceptions. Learning and Instruction, 20(4), 280–290.

Gielen, M., & De Wever, B. (2015). Scripting the role of assessor and assessee in peer assessment in a wiki environment: impact on peer feedback quality and product improvement. Computers & Education, 88, 370–386.

Gielen, S., Peeters, E., Dochy, F., Onghena, P., & Struyven, K. (2010). Improving the effectiveness of peer feedback for learning. Learning and Instruction, 20, 304–315.

Glaser, B. G., & Strauss, A. L. (1967). The discovery of grounded theory: strategies for qualitative research. Chicago: Aldine.

Hanrahan, S. J., & Isaacs, G. (2001). Assessing self- and peer-assessment: the students’ views. Higher Education Research and Development, 20, 53–70.

Harlen, W. (2007). Holding up a mirror to classroom practice. Primary Science Review, 100, 29–31.

Harrison, C., & Harlen, W. (2006). Children’s self- and peer-assessment. In W. Harlen (Ed.), A guide to primary science education (pp. 183–190). Hatfield: Association for Science Education.

Hattie, J., & Timperley, H. (2007). The power of feedback. Review of Educational Research, 77, 81–112.

Hodson, D. (1993). Re-thinking old ways: towards a more critical approach to practical work in school science. Studies in Science Education, 22(1), 85–142.

Hovardas, T., Tsivitanidou, O. E., & Zacharia, Z. C. (2014). Peer versus expert feedback: an investigation of the quality of peer feedback among secondary school students. Computers & Education, 71, 133–152.

Hwang, G. J., Hung, C. M., & Chen, N. S. (2014). Improving learning achievements, motivations and problem-solving skills through a peer assessment-based game development approach. Educational Technology Research and Development, 62(2), 129–145.

Jaillet, A. (2009). Can online peer assessment be trusted? Educational Technology & Society, 12(4), 257.

Kaberman, Z., & Dori, Y. J. (2009). Question posing, inquiry, and modeling skills of chemistry students in the case-based computerized laboratory environment. International Journal of Science and Mathematics Education, 7(3), 597–625.

Kim, M. (2005). The effects of the assessor and assessee’s roles on preservice teachers’ metacognitive awareness, performance, and attitude in a technology-related design task. (Unpublished doctoral dissertation). Tallahassee: Florida State University.

Kollar, I., & Fischer, F. (2010). Peer assessment as collaborative learning: a cognitive perspective. Learning and Instruction, 20(4), 344–348.

Kyza, E. A., Constantinou, C. P., & Spanoudis, G. (2011). Sixth graders’ co-construction of explanations of a disturbance in an ecosystem: exploring relationships between grouping, reflective scaffolding, and evidence-based explanations. International Journal of Science Education, 33(18), 2489–2525.

Labudde, P. (2000). Konstruktivismus im Physikunterricht der Sekundarstufe II (constructivism in physics instruction at the upper secondary level). Bern: Haupt.

Latour, B., & Woolgar, S. (1979). An anthropologist visits the laboratory. In B. Latour & S. Woolgar (Eds.), Laboratory life: The construction of scientific facts (pp. 43–90). Princeton, NJ: Princeton University Press.

Li, L., Liu, X., & Steckelberg, A. L. (2010). Assessor or assessee: how student learning improves by giving and receiving peer feedback. British Journal of Educational Technology, 41(3), 525–536.

Lin, S. S. J., Liu, E. Z. F., & Yuan, S. M. (2001). Web-based peer assessment: feedback for students with various thinking styles. Journal of Computer Assisted Learning, 17, 420–432.

Lindsay, C., & Clarke, S. (2001). Enhancing primary science through self- and paired-assessment. Primary Science Review, 68, 15–18.

Looney, J. W. (2011). Integrating formative and summative assessment: progress toward a seamless system? (OECD Education Working Papers No. 58). doi: 10.1787/5kghx3kbl734-en.

Lynch, M. (1990). The externalized retina: Selection and mathematization in the visual documentation of objects in the life sciences. In M. Lynch & S. Woolgar (Eds.), Representation in scientific practice (pp. 153–186). Cambridge, MA: The MIT Press.

Narciss, S. (2008). Feedback strategies for interactive learning tasks. In J. M. Spector, M. D. Merrill, J. van Merriënboer, & M. P. Driscoll (Eds.), Handbook of research on educational communications and technology (3rd ed., pp. 125–143). New York: Erlbaum.

Narciss, S., & Huth, K. (2006). Fostering achievement and motivation with bug-related tutoring feedback in a computer-based training for written subtraction. Learning and Instruction, 16, 310–322.

National Research Council. (2007). Taking science to school: learning and teaching science in grades K–8. Washington: National Academies Press.

National Research Council. (2012). A framework for K–12 science education: practices, crosscutting concepts, and core ideas. Committee on a conceptual framework for new K–12 science education standards. Board on science education, division of behavioral and social sciences and education. Washington: The National Academies Press.

Nicol, D. J., & Macfarlane-Dick, D. (2006). Formative assessment and self-regulated learning: a model and seven principles of good feedback practice. Studies in Higher Education, 31(2), 199–218.

Nicolaou, C. T. (2010). Συνεργασία και μοντελοποίηση σε μαθησιακά περιβάλλοντα [Modelling and collaboration in learning environments] (in Greek, doctoral dissertation). Nicosia: University of Cyprus, (ISBN: 978-9963-689-84-2).

Nicolaou, C. T., & Constantinou, C. P. (2014). Assessment of the modeling competence: a systematic review and synthesis of empirical research. Educational Research Review, 13, 52–73.

Orsmond, P., Merry, S., & Reiling, K. (1996). The importance of marking criteria in the use of peer-assessment. Assessment and Evaluation in Higher Education, 21(3), 239–250.

Papaevripidou, M. (2012). Teachers as learners and curriculum designers in the context of modeling-centered scientific inquiry (doctoral dissertation). Nicosia: University of Cyprus, (ISBN: 978-9963-700-56-1).

Papaevripidou, M., Nicolaou, C. T., & Constantinou, C. P. (2014). On defining and assessing learners’ modelling competence in science teaching and learning. Philadelphia: Annual Meeting of American Educational Research Association (AERA).

Pluta, W. J., Chinn, C. A., & Duncan, R. G. (2011). Learners’ epistemic criteria for good scientific models. Journal of Research in Science Teaching, 48(5), 486–511.

Prins, F. J., Sluijsmans, D. M. A., Kirschner, P. A., & Strijbos, J.-W. (2005). Formative peer assessment in a CSCL environment: a case study. Assessment & Evaluation in Higher Education, 30, 417–444.

Saari, H., & Viiri, J. (2003). A research-based teaching sequence for teaching the concept of modelling to seventh-grade students. International Journal of Science Education, 25(11), 1333–1352.

Sadler, D. R. (1998). Formative assessment: revisiting the territory. Assessment in Education, 5, 77–84.

Schwarz, C. V., & Gwekwerere, Y. N. (2006). Using a guided inquiry and modeling instructional framework (EIMA) to support K–8 science teaching. Science Education, 91(1), 158–186.

Schwarz, C. V., & White, B. Y. (2005). Metamodeling knowledge: developing students’ understanding of scientific modeling. Cognition and Instruction, 23(2), 165–205.

Schwarz, C. V., Reiser, B. J., Davis, E. A., Kenyon, L., Acher, A., Fortus, D., et al. (2009). Developing a learning progression for scientific modeling: making scientific modeling accessible and meaningful for learners. Journal of Research in Science Teaching, 46(6), 632–654.

Sluijsmans, D. M. A. (2002). Student involvement in assessment, the training of peer assessment skills. Groningen: Interuniversity Centre for Educational Research.

Sluijsmans, D. M. A., Brand-Gruwel, S., & van Merriënboer, J. J. G. (2002). Peer assessment training in teacher education: effects on performance and perceptions. Assessment and Evaluation in Higher Education, 27, 443–454.

Smith, H., Cooper, A., & Lancaster, L. (2002). Improving the quality of undergraduate peer assessment: a case for student and staff development. Innovations in Education and Teaching International, 39(1), 71–81.

Strijbos, J. W., & Sluijsmans, D. (2010). Unravelling peer assessment: methodological, functional, and conceptual developments. Learning and Instruction, 20(4), 265–269.

Topping, K. (1998). Peer assessment between students in colleges and universities. Review of Educational Research, 68(3), 249–276.

Topping, K. J. (2003). Self and peer assessment in school and university: reliability, validity and utility. In M. Segers, F. Dochy, & E. Cascallar (Eds.), Optimising new modes of assessment: in search of qualities and standards (pp. 55–87). Dordrecht: Kluwer Academic Publishers.

Topping, K., Smith, F. F., Swanson, I., & Elliot, A. (2000). Formative peer assessment of academic writing between postgraduate students. Assessment and Evaluation in Higher Education, 25, 149–169.

Tsai, C.-C., Lin, S. S. J., & Yuan, S.-M. (2002). Developing science activities through a network peer assessment system. Computers & Education, 38(1–3), 241–252.

Tseng, S.-C., & Tsai, C.-C. (2007). On-line peer assessment and the role of the peer feedback: a study of high school computer course. Computers and Education, 49, 1161–1174.

Tsivitanidou, O. E., & Constantinou, C. P. (2016a). A study of students' heuristics and strategy patterns in web-based reciprocal peer assessment for science learning. The Internet and Higher Education, 29, 12–22.

Tsivitanidou, O., & Constantinou, C. (2016b). Undergraduate students’ heuristics and strategy patterns in response to web-based peer and teacher assessment for science learning. In M. Vargas (Ed.), Teaching and Learning: Principles, Approaches and Impact Assessment (pp. 65–116). New York: Nova Science Publishers (ISBN: 978-1-63485-228-9).

Tsivitanidou, O. E., Zacharia, Z. C., & Hovardas, T. (2011). Investigating secondary school students’ unmediated peer assessment skills. Learning and Instruction, 21(4), 506–519.

Van Lehn, K. A., Chi, M. T., Baggett, W., & Murray, R. C. (1995). Progress report: towards a theory of learning during tutoring. Pittsburgh: Learning Research and Development Center, University of Pittsburgh.

Walker, M. (2015). The quality of written peer feedback on undergraduates’ draft answers to an assignment, and the use made of the feedback. Assessment & Evaluation in Higher Education, 40(2), 232–247.

Acknowledgements

This study was conducted in the context of the research project ASSIST-ME, which is funded by the European Union’s Seventh Framework Programme for Research and Development (grant agreement no. 321428).

Author information

Olia Tsivitanidou. Learning in Science Group, Department of Educational Sciences, University of Cyprus, P. O. Box 20537, 1678 Nicosia, CY, Cyprus. Email: tsivitanidou.olia@ucy.ac.cy

Current themes of research:

Reciprocal peer-assessment processes in (computer-supported) learning environments. Inquiry-based learning and teaching in science. Science communication research.

Most relevant publications:

Tsivitanidou, O. E., & Constantinou, C. P. (2016). A study of students’ heuristics and strategy patterns in web-based reciprocal peer assessment for science learning. The Internet and Higher Education, 29, 12–22.

Tsivitanidou, O., & Constantinou, C. (2016). Undergraduate students’ heuristics and strategy patterns in response to web-based peer and teacher assessment for science learning. In M. Vargas (Ed.), Teaching and Learning: Principles, Approaches and Impact Assessment (pp. 65–116). New York: Nova Science Publishers (ISBN: 978-1-63485-228-9).

Hovardas, T., Tsivitanidou, O. E., & Zacharia, Z. C. (2014). Peer versus expert feedback: investigating the quality of peer feedback among secondary school students assessing each other’s science web-portfolios. Computers & Education, 71, 133–152.

Tsivitanidou, O. E., Zacharia, Z. C., & Hovardas, T. (2011). Investigating secondary school students’ unmediated peer assessment skills. Learning and Instruction, 21(4), 506–519.

Costas P. Constantinou. Learning in Science Group, Department of Educational Sciences, University of Cyprus, P. O. Box 20537, 1678 Nicosia, CY, Cyprus. Email: c.p.constantinou@ucy.ac.cy

Current themes of research:

Interaction. Thinking. Metacognition in the context of inquiry-oriented science learning.

Most relevant publications:

Nicolaou, C. T., & Constantinou, C. P. (2014). Assessment of the modeling competence: a systematic review and synthesis of empirical research. Educational Research Review, 13, 52–73.

Iordanou, K., & Constantinou, C. P. (2014). Developing pre-service teachers’ evidence-based argumentation skills on socio-scientific issues. Learning and Instruction, 34, 42–57.

Kyza, E. A., Constantinou, C. P., & Spanoudis, G. (2011). Sixth graders’ co-construction of explanations of a disturbance in an ecosystem: exploring relationships between grouping, reflective scaffolding, and evidence-based explanations. International Journal of Science Education, 33(18), 2489–2525.

Papadouris, N., & Constantinou, C. P. (2010). Approaches employed by sixth-graders to compare rival solutions in socio-scientific decision-making tasks. Learning and Instruction, 20(3), 225–238.

Peter Labudde. Centre for Science and Technology Education, School of Education, University of Applied Sciences and Arts North-western Switzerland, Steinentorstrasse 30, Basel 4051, Switzerland. Email: peter.labudde@fhnw.ch

Current themes of research:

Learning and teaching processes in science education. Empirical teaching research. Interdisciplinary teaching and learning. Teacher professional development and gender studies.

Most relevant publications:

Börlin, J. & Labudde, P. (2014): Swiss PROFILES Delphi study: implication for future developments in science education in Switzerland. In: C. Bolte; J. Holbrook; R. Mamlok-Naaman & F. Rauch (Eds). Science Teachers’ Continuous Professional Development in Europe. Case Studies from the PROFILES Project (pp. 48–58). Berlin: Freie Universität Berlin.

Fischer, H.E.; Labudde, P.; Neumann, K; & Viiri, J. (Eds., 2014): Quality of Instruction in Physics, Comparing Finland, Germany and Switzerland. Münster: Waxmann.

Labudde, P. & Delaney, S. (2016): Experiments in science instruction: for sure! Are we really sure? In: I. Eilks; S. Markic & B. Ralle (Eds.). Science Education Research and Practical Work (pp. 193–204). Aachen: Shaker.

Labudde, P.; Nidegger, C.; Adamina, M. & Gingins, F. (2012): The development, validation, and implementation of standards in science education: chances and difficulties in the Swiss project HarmoS. In: Bernholt, S.; Neumann, K. & Nentwig, P. (Eds.): Making it tangible: learning outcomes in science education (pp. 235–259). Münster, New York, München, Berlin: Waxmann.

Mathias Ropohl. Leibniz Institute for Science and Mathematics Education, Olshausenstrasse 62, 24118 Kiel, Germany. Kiel University, Olshausenstr. 75, 24118 Kiel, Germany. Email: ropohl@ipn.uni-kiel.de

Current themes of research:

Formative and summative assessment methods of students’ competencies in chemistry. Analysis of the use of media in science education. Professionalization of teacher candidates in the first and second phase of pre-service teacher education.

Most relevant publications:

Rönnebeck, S., Bernholt, S., & Ropohl, M. (2016). Searching for a common ground—a literature review of empirical research on scientific inquiry activities. Studies in Science Education, 52(2), 161–197. doi:10.1080/03057267.2016.1206351.

Walpuski, M., Ropohl, M., & Sumfleth, E. (2011). Students’ knowledge about chemical reactions – development and analysis of standard-based test items. Chemistry Education: Research and Practice, 12, 174–183. doi:10.1039/C1RP90022F.

Ropohl, M., Walpuski, M., & Sumfleth, E. (2015). Welches Aufgabenformat ist das richtige?—Empirischer Vergleich zweier Aufgabenformate zur standardbasierten Kompetenzmessung. Zeitschrift für Didaktik der Naturwissenschaften, 21, 1–15. doi:10.1007/s40573-014-0020-6.

Silke Rönnebeck. Leibniz Institute for Science and Mathematics Education, Olshausenstrasse 62, 24118 Kiel, Germany. Kiel University, Olshausenstr. 75, 24118 Kiel, Germany. Email: sroennebeck@uv.uni-kiel.de

Current themes of research:

The fifth author is currently working as a Research Scientist at Kiel University. Her research interests include the assessment of scientific competencies in international large-scale assessments (PISA), formative assessment, inquiry-based teaching and learning, and effective teacher professional development.

Most relevant publications:

Rönnebeck, S., Bernholt, S., & Ropohl, M. (2016). Searching for a common ground—a literature review of empirical research on scientific inquiry activities. Studies in Science Education, 52(2), 161–197. doi:10.1080/03057267.2016.1206351.

Rönnebeck, S., Schöps, K., Prenzel, M., Mildner, D. & Hochweber, J. (2010). Naturwissenschaftliche Kompetenz von PISA 2006 bis PISA 2009. In E. Klieme, C. Artelt, J. Hartig, N. Jude, O. Köller, M. Prenzel, W. Schneider & P. Stanat (Eds.), PISA 2009 - Bilanz nach einem Jahrzehnt (pp. 177–198). Münster: Waxmann.

Nentwig, P., Roennebeck, S., Schoeps, K., Rumann, S., & Carstensen, C. (2009). Performance and levels of contextualization in a selection of OECD countries in PISA 2006. Journal of Research in Science Teaching, 46(8), 897–908.

Cite this article

Tsivitanidou, O.E., Constantinou, C.P., Labudde, P. et al. Reciprocal peer assessment as a learning tool for secondary school students in modeling-based learning. Eur J Psychol Educ 33, 51–73 (2018). https://doi.org/10.1007/s10212-017-0341-1

DOI: https://doi.org/10.1007/s10212-017-0341-1