Abstract

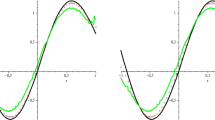

In this paper, we develop a constructive theory for approximating absolutely continuous functions by series of certain sigmoidal functions. Estimates for the approximation error are also derived. The relation with neural network approximation is discussed. The connection between sigmoidal functions and the scaling functions of \(r\)-regular multiresolution approximations is investigated. In this setting, we show that the approximation error for \(C^1\)-functions decreases as \(2^{-j}\), as \(j \rightarrow + \infty \). Examples with sigmoidal functions of several kinds, such as logistic, hyperbolic tangent, and Gompertz functions, are given.


1 Introduction

In this paper, we develop a new theory for approximating functions of a certain class uniformly by series of sigmoidal functions, i.e., functions \(\sigma : \mathbb{R }\rightarrow \mathbb{R }\) such that \(\lim _{x\rightarrow -\infty } \sigma (x) = 0\) and \(\lim _{x\rightarrow +\infty }\sigma (x) = 1\). The idea is to start from suitable real-valued functions \(\phi \), normalized so that \(\int _{\mathbb{R }}\phi (t) \, dt = 1\), and to construct sigmoidal functions of the integral form \(\sigma _{\phi }(x) := \int _{-\infty }^x \phi (t) \, dt,\,x \in \mathbb{R }\). In this way, we can define the operators

$$\begin{aligned} (S_w^{\sigma _{\phi }} f)(x) := \sum _{k \in \mathbb{Z }} \left[ \int \limits _a^b \phi (w y - k) \, f'(y) \, dy \right] \sigma _{\phi }(w x - k) + f(a), \end{aligned}$$(I)

\(x \in [a,b]\), where \(f\) is an absolutely continuous function on \([a,b] \subset \mathbb{R }\), and \(w > 0\) (note that (I) becomes trivial for constant \(f\), since then \(f' = 0\) and \(S_w^{\sigma _{\phi }} f = f(a) = f\)).

We show that the family \((S_w^{\sigma _{\phi }} f)_{w>0}\) converges to \(f\) uniformly on \([a,b]\). Moreover, we derive estimates for the approximation error and for the truncation error of the series.

A remarkable result is obtained when \(\phi \) is the real-valued wavelet scaling function associated with an \(r\)-regular multiresolution approximation of \(L^2(\mathbb{R })\), constructed by a suitable procedure, see [11, 17, 29, 30]. In this setting, we replace the weights \(w\) with \(2^j,\,j \in \mathbb{N }^+\), which is more natural in view of the relation between \(\phi \) and the multiresolution approximation. In this case too, we show that the family of operators \((S_{j}^{\sigma _{\phi }} f)_{j \in \mathbb{N }^{+}}\) converges to \(f\) as \(j \rightarrow +\infty \), uniformly on \([a,b]\). For \(C^1\)-functions, the approximation error decreases to zero as \(2^{-j}\) when \(j \rightarrow + \infty \).

Approximation procedures based on sigmoidal functions find applications, for instance, in the theory of neural networks (NNs). NNs arose as a practical technique, have been successfully adopted to model a number of real-world problems, and are often used in Approximation Theory as “universal approximators”. They have the form

$$\begin{aligned} N(x) := \sum _{k=1}^{m} c_k \, \sigma (x \cdot w_k - \theta _k), \quad x \in \mathbb{R }^n, \end{aligned}$$(II)

where \(m \in \mathbb{N }^+\) is the number of neurons, \(x \cdot w_k := \sum _{i=1}^n x_i w_{k_i}\) denotes the inner product in \(\mathbb{R }^n\), the \(c_k\)’s are real coefficients, the \(w_k\)’s are the weights, the \(\theta _k\)’s are threshold values, and \(\sigma \) is a sigmoidal activation function.

A theory for approximating functions by NNs of the form (II) was developed by Cybenko in [16], where their approximation capability was established by nonconstructive arguments. Often, \(\sigma \) is either the well-known logistic function or the sigmoidal function generated by the hyperbolic tangent, see [1, 2, 8]. The theory of NNs is mainly multivariate in nature, but useful constructive approximation results have also been obtained for univariate functions, see, e.g., [1, 2, 9, 14, 19, 22, 33]. Basic results on NNs were established by Li, Lenze, Mhaskar, Micchelli and Pinkus in [23, 26, 27, 31, 32, 34]. For results concerning the order of approximation, see [3, 10, 13, 15, 20, 24, 25]. One-dimensional NNs also play a role in numerical analysis. For instance, they have been used to solve ordinary differential equations [28], and Fredholm or Volterra integral equations of the second kind [7, 12]. In this context, constructive approximation algorithms are essential.

The theory for approximating certain functions by series of sigmoidal functions proposed in this paper can be exploited to obtain NN approximations. This approach is new and yields a constructive approximation algorithm based on a new class of sigmoidal functions.

In its present form, however, this theory does not cover the important cases of NNs activated by the logistic, hyperbolic tangent, or Gompertz sigmoidal functions. Therefore, in Sect. 5, we propose an extension of the theory that includes such cases, and we also provide estimates of the approximation error for functions belonging to a Lipschitz class.

2 Approximation by series of sigmoidal functions

In what follows, we denote by \(C[a,b]\) and \(AC[a,b]\), respectively, the sets of all continuous and all absolutely continuous functions \(f: [a,b] \rightarrow \mathbb{R }\) on the bounded, closed, nonempty interval \([a,b]\); \(\Vert \cdot \Vert _{\infty }\) is the usual sup norm \(\Vert f \Vert _{\infty } := \max _{x \in [a,b]}|f(x)|\). Moreover, \({\widehat{C}}^n[a,b],\,n \in \mathbb{N }^+\), denotes the set of all functions \(f \in C^n(a',b')\) for some open real interval \((a',b')\) such that \([a,b] \subset (a',b')\).

Let us introduce the class of functions we will work with.

Definition 2.1

The function \(\phi :\mathbb{R }\rightarrow \mathbb{R }^+_0\) is said to belong to the class \(\Phi \) if it satisfies the following conditions:

-

\((\varphi 1)\, \phi \) is continuous on \(\mathbb{R }\) and there exists \(C > 0\) such that

$$\begin{aligned} \phi (x) \le C (1 + |x|)^{-\alpha }, \end{aligned}$$for every \(x \in \mathbb{R }\), and for some \(\alpha \ge 2\);

-

\((\varphi 2)\) \(\sum _{k \in \mathbb{Z }}\phi (x - k) = 1\), for every \(x \in \mathbb{R }\).

Remark 2.2

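The condition \((\varphi 2)\) is equivalent to

$$\begin{aligned} {\widehat{\phi }}(2 \pi k) = \left\{ \begin{array}{ll} 1, & k = 0, \\ 0, & k \in \mathbb{Z }\setminus \left\{ 0 \right\} , \end{array} \right. \end{aligned}$$

where \({\widehat{\phi }}(v) := \int _{\mathbb{R }} \phi (t) \, e^{-ivt} \, dt,\,v \in \mathbb{R }\), is the Fourier transform of \(\phi \); see [6]. In particular, it follows that \({\widehat{\phi }}(0) = \int _{\mathbb{R }} \phi (t) \, dt = 1\).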

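For any fixed \(\phi \in \Phi \), the function \(K_{\phi }: \mathbb{R }^2\rightarrow \mathbb{R }^+_0\), defined by

$$\begin{aligned} K_{\phi }(x,y) := \sum _{k \in \mathbb{Z }} \phi (x - k) \, \phi (y - k), \quad x,y \in \mathbb{R }, \end{aligned}$$

will be called the kernel associated with \(\phi \). It follows from condition \((\varphi 2)\) and Remark 2.2 that

$$\begin{aligned} \int \limits _{\mathbb{R }} K_{\phi }(x,y) \, dy = \sum _{k \in \mathbb{Z }} \phi (x - k) \int \limits _{\mathbb{R }} \phi (y - k) \, dy = 1, \quad x \in \mathbb{R }. \end{aligned}$$(2)

Moreover, using \((\varphi 1)\), it is easy to see that

$$\begin{aligned} |K_{\phi }(x,y)| \le L \, (1 + |x - y|)^{-\alpha }, \quad x,y \in \mathbb{R }, \end{aligned}$$(3)

for some positive constant \(L\), where \(\alpha \ge 2\) is the constant of condition \((\varphi 1)\). Under these assumptions on \(K_{\phi }\), the following lemma holds; it will be useful later. Its proof is classical and can be found in [30].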

Lemma 2.3

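Let \((T_w)_{w>0}\) be the family of operators defined explicitly by

$$\begin{aligned} (T_w f)(x) := w \int \limits _{\mathbb{R }} K(w x, w y) \, f(y) \, dy, \quad x \in \mathbb{R },\ w > 0, \end{aligned}$$

for \(f: \mathbb{R }\rightarrow \mathbb{R }\) (or \(\mathbb{C }\)), where the kernel \(K: \mathbb{R }^2 \rightarrow \mathbb{R }\) (or \(\mathbb{C }\)) satisfies conditions (2) and (3). Then, for every uniformly continuous and bounded function \(f\), we have

$$\begin{aligned} \lim _{w \rightarrow +\infty } \sup _{x \in \mathbb{R }} |(T_w f)(x) - f(x)| = 0. \end{aligned}$$

Moreover, for every \(f \in L^p(\mathbb{R }),\,1 \le p < +\infty \),

$$\begin{aligned} \lim _{w \rightarrow +\infty } \Vert T_w f - f \Vert _p = 0. \end{aligned}$$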
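To make conditions (2) and (3) concrete, the following Python sketch evaluates the kernel \(K_{\phi }\) for one particular choice of \(\phi \), namely the hat function \(M_2(x) = \max \left\{ 0, 1 - |x| \right\} \) (the central B-spline of order 2, discussed in Sect. 3). It is an illustration under that assumption only: the function names are hypothetical, and the integral in (2) is approximated by a plain midpoint rule. Since \(M_2\) has compact support, \(K_{\phi }(x,y) = 0\) whenever \(|x - y| \ge 2\), so (3) holds trivially for every \(\alpha \).

```python
import math

def hat(x):
    """Assumed phi: the hat function M_2(x) = max(0, 1 - |x|), supported on [-1, 1]."""
    return max(0.0, 1.0 - abs(x))

def kernel(x, y):
    """K_phi(x, y) = sum_k phi(x - k) phi(y - k); only integers k with |x - k| < 1 contribute."""
    k0 = math.floor(x)
    return sum(hat(x - k) * hat(y - k) for k in range(k0 - 1, k0 + 3))

def row_integral(x, n=6000, half_width=3.0):
    """Midpoint rule for int_R K_phi(x, y) dy; K_phi(x, y) = 0 whenever |x - y| >= 2."""
    h = 2.0 * half_width / n
    return h * sum(kernel(x, x - half_width + (i + 0.5) * h) for i in range(n))

for x in (0.0, 0.3, 1.7, -2.25):
    print(x, row_integral(x))   # each value should be close to 1, as required by (2)
```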

Recall now the following

Definition 2.4

A function \(\sigma : \mathbb{R }\rightarrow \mathbb{R }\) is called a “sigmoidal function”, whenever \( \lim _{x \rightarrow -\infty } \sigma (x) = 0\) and \(\lim _{x \rightarrow +\infty } \sigma (x) = 1\).

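Sometimes, boundedness, continuity and/or monotonicity are required in addition. Let now \(\phi \in \Phi \) be fixed and define the function \(\sigma _{\phi }:\mathbb{R }\rightarrow \mathbb{R }^+_0\) as

$$\begin{aligned} \sigma _{\phi }(x) := \int \limits _{-\infty }^x \phi (t) \, dt, \quad x \in \mathbb{R }. \end{aligned}$$(4)

Since \(\phi \ge 0\) and, by condition \((\varphi 2)\) and Remark 2.2, \(\int _{\mathbb{R }} \phi (t) \, dt = 1\), the function \(\sigma _{\phi }\) is a continuous, nondecreasing sigmoidal function. We can now give the following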

Definition 2.5

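For every fixed function \(\phi \in \Phi \), we define the family of operators \((S_w^{\sigma _{\phi }})_{w>0}\) by

$$\begin{aligned} (S_w^{\sigma _{\phi }} f)(x) := \sum _{k \in \mathbb{Z }} \left[ \int \limits _a^b \phi (w y - k) \, f'(y) \, dy \right] \sigma _{\phi }(w x - k) + f(a), \quad x \in [a,b], \end{aligned}$$

for every \(f \in AC[a,b]\) and \(w > 0\). We call \(S_w^{\sigma _{\phi }} f\) the “series of sigmoidal functions for \(f\), based on \(\phi \)”, for the given value of \(w > 0\).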

Clearly, when \(f\) is a constant function, Definition 2.5 is trivial, since \(f' = 0\) and hence \(S_w^{\sigma _{\phi }} f = f(a) = f\). We can now prove the following

Theorem 2.6

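Let \(\phi \in \Phi \) be fixed. For any given \(f \in AC[a,b]\), the family \((S_w^{\sigma _{\phi }} f)_{w>0}\) converges uniformly to \(f\) on \([a,b]\), i.e.,

$$\begin{aligned} \lim _{w \rightarrow +\infty } \Vert S_w^{\sigma _{\phi }} f - f \Vert _{\infty } = 0. \end{aligned}$$

Moreover, if \(f \in {\widehat{C}}^1[a,b]\), we have

$$\begin{aligned} \Vert S_w^{\sigma _{\phi }} f - f \Vert _{\infty } \le {\widetilde{C}} \, w^{-1}, \end{aligned}$$

for some positive constant \({\widetilde{C}}\) and for every \(w > 0\).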

Proof

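Since \(f \in AC[a,b]\), we have \(f(x)= \int ^x_a f'(z)\, dz\, + f(a)\) for every \(x \in [a,b]\). Then, setting \({\widetilde{f}}'(z) = f'(z)\) for \(z \in [a,b]\) and \({\widetilde{f}}'(z) = 0\) for \(z \notin [a,b]\), we obtain

$$\begin{aligned} (S_w^{\sigma _{\phi }} f)(x) - f(x) = \sum _{k \in \mathbb{Z }} \left[ \int \limits _{\mathbb{R }} \phi (w y - k) \, {\widetilde{f}}'(y) \, dy \right] \sigma _{\phi }(w x - k) - \int \limits _{-\infty }^x {\widetilde{f}}'(z) \, dz, \quad x \in [a,b]. \end{aligned}$$

Changing variable by setting \(t = w z - k\), we get \(\sigma _{\phi }(w x - k) = w \int _{-\infty }^x \phi (w z - k) \, dz\). Hence, denoting by \((T_w)_{w>0}\) the operators of Lemma 2.3 with kernel \(K = K_{\phi }\), we obtain

$$\begin{aligned} |(S_w^{\sigma _{\phi }} f)(x) - f(x)| = \left| \int \limits _{-\infty }^x \left[ (T_w {\widetilde{f}}')(z) - {\widetilde{f}}'(z) \right] dz \right| \le \Vert T_w {\widetilde{f}}' - {\widetilde{f}}' \Vert _1, \quad x \in [a,b]. \end{aligned}$$(5)

Since \({\widetilde{f}}' \in L^1(\mathbb{R })\), we obtain by Lemma 2.3 and inequality (5)

$$\begin{aligned} \Vert S_w^{\sigma _{\phi }} f - f \Vert _{\infty } \le \Vert T_w {\widetilde{f}}' - {\widetilde{f}}' \Vert _1 \rightarrow 0, \quad w \rightarrow +\infty , \end{aligned}$$

which completes the proof of the first part of the theorem.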

Consider now \(f \in {\widehat{C}}^1[a,b]\). Note that, by conditions \((\varphi 2)\) and (2), we have

Then, again from inequality (5), we obtain

Substituting \(z = z_1/w\) and \(y = y_1/w\) in the last integral in (6), we obtain, in view of condition (3),

for every \(w > 0\), for some \(\widetilde{C}>0\), and where \(\alpha \ge 2\) is the constant of condition \((\varphi 1)\). This completes the proof of the second part of the theorem. \(\square \)
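Theorem 2.6 can be observed numerically. The Python sketch below assumes \(\phi = M_2\) (the hat function, shown in Sect. 3 to belong to \(\Phi _C\)), for which \(\sigma _{\phi }\) is piecewise quadratic. It computes the coefficients \(\int _a^b \phi (w y - k) f'(y)\, dy\) with a composite Simpson rule (a choice made here, not prescribed by the theory) and keeps only the finitely many \(k\) whose coefficients can be nonzero. With \(f = \sin \) on \([0,1]\), the printed sup-norm errors should shrink roughly like \(w^{-1}\), as the second estimate of Theorem 2.6 predicts. All function names are hypothetical.

```python
import math

def hat(x):
    """Assumed phi = M_2 (hat function), which lies in Phi_C with supp phi = [-1, 1]."""
    return max(0.0, 1.0 - abs(x))

def sigma_hat(x):
    """sigma_phi(x) = int_{-inf}^x hat(t) dt: a continuous, piecewise quadratic sigmoidal function."""
    if x <= -1.0:
        return 0.0
    if x <= 0.0:
        return 0.5 * (1.0 + x) ** 2
    if x < 1.0:
        return 1.0 - 0.5 * (1.0 - x) ** 2
    return 1.0

def simpson(g, lo, hi, n=64):
    """Composite Simpson rule with n (even) subintervals; 0 on an empty interval."""
    if hi <= lo:
        return 0.0
    h = (hi - lo) / n
    s = g(lo) + g(hi) + sum((4 if i % 2 else 2) * g(lo + i * h) for i in range(1, n))
    return s * h / 3.0

def sigmoidal_series(f, df, a, b, w):
    """Return x -> (S_w f)(x); with supp phi = [-1, 1] only w*a - 1 <= k <= w*b + 1 contribute."""
    coeffs = {}
    for k in range(math.ceil(w * a - 1), math.floor(w * b + 1) + 1):
        g = lambda y, k=k: hat(w * y - k) * df(y)
        # supp phi(w . - k) = [(k-1)/w, (k+1)/w]; split at the kink y = k/w
        coeffs[k] = (simpson(g, max(a, (k - 1) / w), min(b, k / w))
                     + simpson(g, max(a, k / w), min(b, (k + 1) / w)))
    return lambda x: f(a) + sum(c * sigma_hat(w * x - k) for k, c in coeffs.items())

def sup_error(f, df, a, b, w, m=200):
    """Largest |(S_w f)(x) - f(x)| over m + 1 equispaced points of [a, b]."""
    s = sigmoidal_series(f, df, a, b, w)
    xs = [a + i * (b - a) / m for i in range(m + 1)]
    return max(abs(s(x) - f(x)) for x in xs)

for w in (10, 20, 40):
    print(w, sup_error(math.sin, math.cos, 0.0, 1.0, w))
```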

Examples of functions \(\phi \in \Phi \) will be given in the next sections.

3 Application to neural networks

Here, we apply the theory developed in the previous section to NNs of the form (II). Below, we study NNs of the type (II) in the univariate setting, activated by the sigmoidal functions generated by (4). We denote by \(\Phi _C\) the subset of \(\Phi \) consisting of compactly supported functions.

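Let \(\phi \in \Phi _C\) be fixed, and let \(M_1,\,M_2 > 0\) be such that \(\text{ supp } \, \phi \subseteq [-M_1,M_2]\). In this case, for any \(f \in AC[a,b]\) and \(w > 0\) we have

$$\begin{aligned} \int \limits _a^b \phi (w y - k) \, f'(y) \, dy = 0 \end{aligned}$$

for every \(k \in \mathbb{Z }\) with \(k < w a - M_2\) or \(k > w b + M_1\), since for these values of \(k\), \([w a - k,w b - k] \cap [-M_1,M_2] = \emptyset \). Hence, the series in the definition of the operator \(S_w^{\sigma _{\phi }} f\) reduces to a finite sum, i.e.,

$$\begin{aligned} (S_w^{\sigma _{\phi }} f)(x) = \sum _{k = \left\lceil w a - M_2 \right\rceil }^{\left\lfloor w b + M_1 \right\rfloor } \left[ \int \limits _a^b \phi (w y - k) \, f'(y) \, dy \right] \sigma _{\phi }(w x - k) + f(a), \end{aligned}$$

for every \(x \in [a,b]\), where \(\left\lceil x \right\rceil \) and \(\left\lfloor x \right\rfloor \) denote the upper and the lower integer part of \(x \in \mathbb{R }\), respectively. We now introduce the following modification of Definition 2.5 for the case \(\phi \in \Phi _C\). For any \(f \in AC[a,b]\), set

$$\begin{aligned} (G_w^{\sigma _{\phi }} f)(x) := \sum _{k = \left\lceil w a - M_2 \right\rceil }^{\left\lfloor w b + M_1 \right\rfloor } \left[ \int \limits _a^b \phi (w y - k) \, f'(y) \, dy \right] \sigma _{\phi }(w x - k) + f(a) \, \sigma _{\phi }(w(x - a + 1)), \end{aligned}$$

for every \(x \in [a,b]\) and \(w > 0\). The \(G_w^{\sigma _{\phi }} f\)’s are NNs of the form (II), with \(n = 1\). They approximate \(f\) uniformly on \([a,b]\) as \(w \rightarrow +\infty \). The proof of this claim follows from the same arguments used in Theorem 2.6, taking into account that

$$\begin{aligned} (G_w^{\sigma _{\phi }} f)(x) = (S_w^{\sigma _{\phi }} f)(x), \quad x \in [a,b], \end{aligned}$$

for \(w>0\) sufficiently large. Indeed, by the definition of \(\sigma _{\phi }\), for every \(w > M_2\) we have

$$\begin{aligned} \sigma _{\phi }(w(x - a + 1)) = \int \limits _{-\infty }^{w(x - a + 1)} \phi (t) \, dt = \int \limits _{-M_1}^{M_2} \phi (t) \, dt = 1, \quad x \in [a,b], \end{aligned}$$(8)

since \(w(x - a + 1) \ge w > M_2\). Moreover, again by Theorem 2.6, if \(f \in {\widehat{C}}^1[a,b]\) we obtain the convergence rate \(\Vert G_w^{\sigma _{\phi }} f - f \Vert _{\infty } \le \widetilde{C} w^{-1}\), for some positive constant \(\widetilde{C}\) and for every \(w > M_2\).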
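The NN \(G_w^{\sigma _{\phi }} f\) can be written out explicitly as a list of neurons \((c_k, w_k, \theta _k)\) in the notation of (II), with \(n = 1\). The Python sketch below does this for the assumed choice \(\phi = M_2\) (so \(M_1 = M_2 = 1\)), \(f = \sin \), \([a,b] = [0,1]\) and \(w = 20\); the coefficients are computed with a midpoint rule, and the helper names are hypothetical. The extra neuron \(f(a)\,\sigma _{\phi }(w(x-a+1))\) has weight \(w\) and threshold \(w(a-1)\); as noted in the text, for \(w > M_2\) it equals \(f(a)\) on \([a,b]\).

```python
import math

M1, M2 = 1.0, 1.0                      # supp phi = [-M1, M2] for phi = M_2

def hat(x):
    """Assumed phi = M_2 (hat function)."""
    return max(0.0, 1.0 - abs(x))

def sigma_hat(x):
    """sigma_phi for phi = M_2 (integral of the hat function from -inf to x)."""
    if x <= -1.0:
        return 0.0
    if x <= 0.0:
        return 0.5 * (1.0 + x) ** 2
    return 1.0 - 0.5 * (1.0 - min(x, 1.0)) ** 2

def midpoint(g, lo, hi, n=400):
    """Midpoint rule; returns 0 for an empty interval."""
    if hi <= lo:
        return 0.0
    h = (hi - lo) / n
    return h * sum(g(lo + (i + 0.5) * h) for i in range(n))

def build_network(f, df, a, b, w):
    """Neurons (c_j, w_j, theta_j) of G_w f, so that G_w f(x) = sum_j c_j sigma(w_j x - theta_j)."""
    neurons = []
    for k in range(math.ceil(w * a - M2), math.floor(w * b + M1) + 1):
        c = midpoint(lambda y: hat(w * y - k) * df(y), max(a, (k - M1) / w), min(b, (k + M2) / w))
        neurons.append((c, w, float(k)))
    neurons.append((f(a), w, w * (a - 1.0)))      # the neuron f(a) * sigma(w (x - a + 1))
    return neurons

def evaluate(neurons, x):
    return sum(c * sigma_hat(wj * x - theta) for c, wj, theta in neurons)

net = build_network(math.sin, math.cos, 0.0, 1.0, 20.0)
print(len(net), max(abs(evaluate(net, i / 100) - math.sin(i / 100)) for i in range(101)))
```

With these choices the network has \(\lfloor wb + M_1 \rfloor - \lceil wa - M_2 \rceil + 2 = 24\) neurons.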

Our work provides a unified approach to NN approximation. In addition, our proofs are constructive and determine the form of the NN explicitly. In particular, they show that the NNs \(G_w^{\sigma _{\phi }} f\) approximate every \(f \in AC[a,b]\) arbitrarily well with respect to the uniform norm.

We now show that NNs can also be obtained starting from functions \(\phi \in \Phi \) that are not necessarily compactly supported. Let us first prove the following

Lemma 3.1

The series \(\sum _{k \in \mathbb{Z }}\phi (wx-k)\) converges uniformly on the compact subsets of \(\mathbb{R }\), for every fixed \(w > 0\).

In particular, for every \([a,b] \subset \mathbb{R }\) we have

$$\begin{aligned} \sup _{x \in [a,b]} \sum _{|k|>N} \phi (w x - k) \le \overline{C} \left\{ (N-wb+1)^{-(\alpha -1)} + (N+wa+1)^{-(\alpha -1)}\right\} , \end{aligned}$$(9)

for some \(\overline{C}>0\) and for every \(N \in \mathbb{N }^+\) with \(N > w \max \left\{ |a|, |b| \right\} \), where \(\alpha \ge 2\) is the constant of condition \((\varphi 1)\).

Proof

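Let \([a,b] \subset \mathbb{R }\) be fixed, and let \(N > w \max \left\{ |a|, |b| \right\} \). For \(x \in [a,b]\), we have \(1 + |w x - k| \ge 1 + k - wb\) for \(k > N\), and \(1 + |w x - k| \ge 1 + |k| + wa\) for \(k < -N\). Hence, by condition \((\varphi 1)\), comparing the sums with integrals of decreasing functions,

$$\begin{aligned} \sum _{|k|>N} \phi (w x - k)&\le C \sum _{k=N+1}^{\infty } (1 + k - wb)^{-\alpha } + C \sum _{k=N+1}^{\infty } (1 + k + wa)^{-\alpha }\\&\le C \int \limits _N^{+\infty } (1 + t - wb)^{-\alpha } \, dt + C \int \limits _N^{+\infty } (1 + t + wa)^{-\alpha } \, dt\\&= \frac{C}{\alpha - 1} \left\{ (N-wb+1)^{-(\alpha -1)} + (N+wa+1)^{-(\alpha -1)}\right\} . \end{aligned}$$

Since the right-hand side tends to \(0\) as \(N \rightarrow +\infty \), uniformly with respect to \(x \in [a,b]\), the proof follows. \(\square \)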
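The estimate of Lemma 3.1 can be checked numerically for a function satisfying only \((\varphi 1)\). In the Python sketch below, \(\phi (x) = (1+|x|)^{-3}\) (so \(C = 1\), \(\alpha = 3\)) is a hypothetical example used only to test the inequality: it is neither normalized nor required to satisfy \((\varphi 2)\), since the lemma does not use these properties. The constant \(\overline{C} = C/(\alpha -1)\) is the one produced by comparing each tail sum with an integral. The infinite tail is truncated at \(|k| \le 5000\), which can only decrease the computed sums, and the supremum over \([a,b]\) is replaced by a maximum over a grid.

```python
def phi_power(x, alpha=3.0):
    """Hypothetical phi satisfying (phi1) with C = 1: (1 + |x|)^(-alpha).
    Only (phi1) is used by Lemma 3.1, so normalization and (phi2) are ignored here."""
    return (1.0 + abs(x)) ** (-alpha)

def tail(x, w, N, K=5000, alpha=3.0):
    """Sum over N < |k| <= K of phi(w x - k); terms with |k| > K are dropped."""
    return sum(phi_power(w * x - k, alpha) + phi_power(w * x + k, alpha)
               for k in range(N + 1, K + 1))

def bound(a, b, w, N, alpha=3.0, C=1.0):
    """Right-hand side of the estimate in Lemma 3.1 with C_bar = C / (alpha - 1)."""
    return C / (alpha - 1.0) * ((N - w * b + 1.0) ** (1.0 - alpha)
                                + (N + w * a + 1.0) ** (1.0 - alpha))

a, b, w = -1.0, 1.5, 2.0
for N in (4, 10, 40):                 # all satisfy N > w * max(|a|, |b|) = 3
    worst = max(tail(a + i * (b - a) / 20, w, N) for i in range(21))
    print(N, worst, bound(a, b, w, N))
```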

We can now establish the following

Theorem 3.2

-

(i)

For any \(f \in AC[a,b]\), we denote by

$$\begin{aligned} (G_{N,w}^{\sigma _{\phi }} f)(x)&:= \sum _{k=-N}^N \left[ \int \limits _a^b \phi (w y - k) \, f'(y) \, dy \right] \sigma _{\phi }(w x - k) \nonumber \\&+ f(a) \, \sigma _{\phi }(w(x - a + 1)), \end{aligned}$$(10)

for \(x \in [a,b],\, w > 0\), and \(N \in \mathbb{N }^+\). Then, for every \(\varepsilon > 0\) there exist \(w > 0\) and \(N \in \mathbb{N }^+\) such that

$$\begin{aligned} \Vert G_{N,w}^{\sigma _{\phi }} f - f \Vert _{\infty } < \varepsilon . \end{aligned}$$

-

(ii)

Moreover, for any \(f \in {\widehat{C}}^1[a,b]\) we have

$$\begin{aligned} \Vert G_{N,w}^{\sigma _{\phi }} f - f \Vert _{\infty }&\le C_1 \left\{ (N-wb+1)^{-(\alpha -1)} + (N+wa+1)^{-(\alpha -1)}\right\} \\&+ C_2 \, w^{-1} + C_3 \, w^{-(\alpha -1)}, \end{aligned}$$for some constants \(C_1,\,C_2,\,C_3 \!>\! 0\), and for every \(w \!>\! 0\) with \(N \!>\! w \max \left\{ |a|,\, |b| \right\} ,\,N \!\in \! \mathbb{N }^+\), where \(\alpha \ge 2\) is the constant appearing in condition \((\varphi 1)\).

Proof

-

(i)

Let \(\varepsilon > 0\) be fixed. For every \(x \in [a,b]\) we have

$$\begin{aligned}&|(G_{N,w}^{\sigma _{\phi }} f)(x) - f (x)| \le |(G_{N,w}^{\sigma _{\phi }} f)(x) - (S_w^{\sigma _{\phi }} f)(x)| + |(S_w^{\sigma _{\phi }} f)(x) - f (x)| \nonumber \\&\quad \le \sum _{|k|>N} \left[ \int \limits _a^b \phi (w y - k) \, |f'(y)| \, dy \right] \sigma _{\phi }(w x - k) + |f(a)||1 - \sigma _{\phi }(w(x - a + 1))|\nonumber \\&\qquad + \Vert S_w^{\sigma _{\phi }} f - f \Vert _{\infty } =: S_1 + S_2 + S_3. \end{aligned}$$(11)

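Proceeding as in (8) and using \((\varphi 1)\), we can write

$$\begin{aligned} S_2 = |f(a)| \int \limits _{w(x - a + 1)}^{+\infty } \phi (t) \, dt \le C \, |f(a)| \int \limits _w^{+\infty } (1 + t)^{-\alpha } \, dt = \underline{C} \, (1 + w)^{-(\alpha -1)}, \end{aligned}$$(12)

where \(\alpha \ge 2\) is the constant appearing in condition \((\varphi 1)\) and \(\underline{C} := C |f(a)|/(\alpha - 1)\); hence \(S_2 < \varepsilon \) for \(w > 0\) sufficiently large. Moreover, Theorem 2.6 yields \(S_3 < \varepsilon \) for \(w > 0\) sufficiently large. Finally, we estimate \(S_1\). Since \(\Vert \sigma _{\phi } \Vert _{\infty } \le 1\), we obtain

$$\begin{aligned} S_1 \le \int \limits _a^b |f'(y)| \left[ \sum _{|k|>N} \phi (w y - k) \right] dy \le \left[ \int \limits _a^b |f'(y)| \, dy \right] \sup _{y \in [a,b]} \sum _{|k|>N} \phi (w y - k). \end{aligned}$$(13)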

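By Lemma 3.1, for every fixed and sufficiently large \(w > 0\), we have

$$\begin{aligned} S_1 \le \overline{C} \left[ \int \limits _a^b |f'(y)| \, dy \right] \left\{ (N-wb+1)^{-(\alpha -1)} + (N+wa+1)^{-(\alpha -1)} \right\} , \end{aligned}$$(14)

for some constant \(\overline{C} > 0\) and for every \(N > w \max \left\{ |a|, |b| \right\} \) with \(N \in \mathbb{N }^+\). Hence, for \(N\) sufficiently large, \(S_1 < \varepsilon \). This completes the proof of (i).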

-

(ii)

For any \(f \in {\widehat{C}}^1[a,b]\), Theorem 2.6 shows that \(S_3 \le {\widetilde{C}} w^{-1}\) uniformly with respect to \(x \in [a,b]\), for every \(w > 0\). Moreover, we obtain by (12) and (14)

$$\begin{aligned} S_1 + S_2 + S_3&\le \overline{C} \left[ \int \limits _a^b |f'(y)| \, dy \right] \left\{ (N-wb+1)^{-(\alpha -1)} \right. \\&\quad + \left. (N+wa+1)^{-(\alpha -1)} \right\} + \widetilde{C} \, w^{-1} + \underline{C} \, (1 + w)^{-(\alpha -1)}\\&\le C_1 \left\{ (N-wb+1)^{-(\alpha -1)} + (N+wa+1)^{-(\alpha -1)} \right\} \\&\quad + C_2 \, w^{-1} + C_3 \, w^{-(\alpha -1)}, \end{aligned}$$uniformly with respect to \(x \in [a,b]\), for some constants \(C_1,\,C_2,\,C_3 > 0\), and for \(w > 0\) sufficiently large, with \(N > w \max \left\{ |a|, \,|b|\right\} \). \(\square \)

Remark 3.3

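Setting \(C_3 = 0\) in Theorem 3.2 (ii), we also obtain an estimate for the truncation error of the series of sigmoidal functions introduced in Sect. 2: the term \(C_3 \, w^{-(\alpha -1)}\) comes only from \(S_2\), i.e., from replacing \(f(a)\) with \(f(a) \, \sigma _{\phi }(w(x - a + 1))\). Note that, as the weight \(w\) increases, a larger number of neurons is needed, since \(N\) must satisfy \(N > w \max \left\{ |a|, |b| \right\} \).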

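We now construct a few examples of sigmoidal functions \(\sigma _{\phi }\), first providing some examples of functions \(\phi \in \Phi _C\) satisfying all the hypotheses of our theory. Recall that the “central B-splines” of order \(n \in \mathbb{N }^+\) are defined as

$$\begin{aligned} M_n(x) := \frac{1}{(n-1)!} \sum _{i=0}^{n} (-1)^i \binom{n}{i} \left( \frac{n}{2} + x - i \right) _+^{n-1}, \quad x \in \mathbb{R }, \end{aligned}$$

where \((x)_+ := \max \left\{ x, 0 \right\} \) is the positive part of \(x \in \mathbb{R }\) [5]. The Fourier transform of \(M_n\) is given by

$$\begin{aligned} \widehat{M_n}(v) = \text{ sinc}^n \left( \frac{v}{2\pi } \right) , \quad v \in \mathbb{R }, \end{aligned}$$

where the \(\text{ sinc}\) function is defined by

$$\begin{aligned} \text{ sinc}(x) := \left\{ \begin{array}{ll} \dfrac{\sin (\pi x)}{\pi x}, & x \ne 0, \\ 1, & x = 0. \end{array} \right. \end{aligned}$$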

The \(M_n\)’s are nonnegative and bounded on \(\mathbb{R }\), continuous for \(n \ge 2\), and supported on \([-n/2,n/2]\). Hence, for \(n \ge 2\), \(M_n \in L^1(\mathbb{R })\) and \(M_n\) satisfies condition \((\varphi 1)\) for every \(\alpha \ge 2\). Finally, since \(\widehat{M_n}(0) = 1\) and \(\widehat{M_n}(2 \pi k) = \text{ sinc}^n(k) = 0\) for \(k \in \mathbb{Z }\setminus \left\{ 0 \right\} \), condition \((\varphi 2)\) holds in view of Remark 2.2; hence, \(M_n \in \Phi _C\) for every \(n \ge 2\). Therefore, we can construct the NNs \(G_w^{\sigma _{M_n}} f,\,n \ge 2\), explicitly.
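The facts used above can be cross-checked numerically: the explicit formula \(M_n(x) = \frac{1}{(n-1)!}\sum _{i=0}^n (-1)^i \binom{n}{i} (n/2 + x - i)_+^{n-1}\), the partition of unity \((\varphi 2)\), and the Fourier transform \(\widehat{M_n}(v) = \text{sinc}^n(v/(2\pi ))\). The Python sketch below assumes \(n \ge 2\) and approximates \(\widehat{M_n}\) by Simpson's rule on a grid that contains all knots of \(M_n\), so that each Simpson panel sees a smooth piece. The function names are hypothetical.

```python
import math

def central_bspline(n, x):
    """M_n(x) from the truncated-power formula, for n >= 2; zero outside (-n/2, n/2)."""
    if abs(x) >= n / 2:
        return 0.0
    s = sum((-1) ** i * math.comb(n, i) * (n / 2 + x - i) ** (n - 1)
            for i in range(n + 1) if n / 2 + x - i > 0)
    return s / math.factorial(n - 1)

def fourier_numeric(n, v, per_unit=1000):
    """int M_n(t) e^{-ivt} dt = int M_n(t) cos(vt) dt (M_n is even), by composite Simpson.
    The knots of M_n fall on even grid indices, i.e. on Simpson panel boundaries."""
    m = per_unit * n                        # even number of subintervals on [-n/2, n/2]
    h = 1.0 / per_unit
    g = lambda t: central_bspline(n, t) * math.cos(v * t)
    s = g(-n / 2) + g(n / 2) + sum((4 if i % 2 else 2) * g(-n / 2 + i * h) for i in range(1, m))
    return s * h / 3.0

def fourier_formula(n, v):
    """sinc^n(v / (2 pi)) with sinc(x) = sin(pi x) / (pi x), i.e. (sin(v/2) / (v/2))^n."""
    return 1.0 if v == 0 else (math.sin(v / 2) / (v / 2)) ** n

for n in (2, 3, 4):
    print(n, sum(central_bspline(n, 0.3 - k) for k in range(-10, 11)),
          fourier_numeric(n, 2.0), fourier_formula(n, 2.0))
```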

As an example of a function \(\phi \in \Phi \) that is not compactly supported, consider the continuous function

Clearly, \(F(x) = \mathcal{O }(x^{-2-\varepsilon })\) as \(x \rightarrow \pm \infty \), for some \(\varepsilon > 0\); hence, \(F\) satisfies condition \((\varphi 1)\) with \(\alpha =2\), see [5]. Moreover, its Fourier transform is

(see [5] again). By Remark 2.2, \(F\) satisfies also condition \((\varphi 2)\), and then \(F \in \Phi \).

Remark 3.4

Note that the theory developed in this section cannot be applied to NNs activated by the logistic function \(\sigma _{\ell }(x) := (1 + e^{-x})^{-1}\) (see, e.g., [4, 21] for applications to Demography and Economics), or by the hyperbolic tangent sigmoidal function \(\sigma _h(x) := \frac{1}{2} + \frac{1}{2} \tanh (x) = \frac{1}{2} + \frac{e^{2x} - 1}{2 (e^{2x} + 1)}\) [1, 2, 8]. In fact, \(\sigma _{\ell }\) and \(\sigma _h\) are generated by (4) from \(\phi _{\ell }(x) := e^{-x} (1 + e^{-x})^{-2}\) and \(\phi _h(x) := 2 e^{2x} (e^{2x} + 1)^{-2}\), respectively. However, \(\widehat{\phi _{\ell }}(v) = \pi v /\sinh (\pi v)\) and \(\widehat{\phi _{h}}(v) = \pi v/(2 \sinh (\pi v/2))\) do not vanish at \(v = 2 \pi k,\,k \in \mathbb{Z }\setminus \left\{ 0 \right\} \); hence they do not meet the condition in Remark 2.2, i.e., \(\phi _{\ell }\) and \(\phi _h\) do not satisfy condition \((\varphi 2)\). In Sect. 5 below, we propose an extension of the theory developed above that allows the use of NNs activated by \(\sigma _{\ell }\) or \(\sigma _h\).
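The failure of \((\varphi 2)\) for \(\phi _{\ell }\) and \(\phi _h\) can also be seen directly. By the Poisson summation formula, \(\sum _k \phi (x-k) = \sum _m \widehat{\phi }(2\pi m) e^{2\pi i m x}\), so for these even functions the difference between the values at \(x = 0\) and \(x = 1/2\) equals \(4\sum _{m \ge 1,\ m \text{ odd}} \widehat{\phi }(2\pi m)\), which is dominated by \(4\widehat{\phi }(2\pi )\). The Python sketch below (helper names hypothetical) computes both quantities; the deviation from a constant is tiny for \(\phi _{\ell }\) (of order \(10^{-7}\)) and of order \(10^{-3}\) for \(\phi _h\), but nonzero in both cases.

```python
import math

def phi_logistic(x):
    """phi_l(x) = e^{-x} (1 + e^{-x})^{-2}, written stably as 1 / (4 cosh^2(x/2))."""
    return 0.25 / math.cosh(x / 2) ** 2

def phi_tanh(x):
    """phi_h(x) = 2 e^{2x} (e^{2x} + 1)^{-2}, written stably as 1 / (2 cosh^2 x)."""
    return 0.5 / math.cosh(x) ** 2

def periodization(phi, x, K=80):
    """sum_{|k| <= K} phi(x - k); the dropped terms are far below double precision here."""
    return sum(phi(x - k) for k in range(-K, K + 1))

def ft_logistic(v):
    return 1.0 if v == 0 else math.pi * v / math.sinh(math.pi * v)

def ft_tanh(v):
    return 1.0 if v == 0 else math.pi * v / (2 * math.sinh(math.pi * v / 2))

for name, phi, ft in (("logistic", phi_logistic, ft_logistic), ("tanh", phi_tanh, ft_tanh)):
    gap = periodization(phi, 0.0) - periodization(phi, 0.5)
    # Poisson summation: gap = 4 * phi_hat(2 pi) + contributions from odd m >= 3 (negligible)
    print(name, gap, 4 * ft(2 * math.pi))
```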

4 Sigmoidal functions and multiresolution approximation

In this section, we show a connection between the theory of multiresolution approximations and our theory for approximating functions by series of sigmoidal functions. We first recall some basic facts about multiresolution approximations; for the detailed theory, see [11, 17, 29, 30, 36]. We start by recalling the following

Definition 4.1

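A multiresolution approximation of \(L^2(\mathbb{R })\) is an increasing sequence \(V_j,\,j \in \mathbb{Z }\), of closed linear subspaces of \(L^2(\mathbb{R })\) with the following properties:

$$\begin{aligned} \bigcap _{j \in \mathbb{Z }} V_j = \left\{ 0 \right\} , \quad \overline{\bigcup _{j \in \mathbb{Z }} V_j} = L^2(\mathbb{R }); \end{aligned}$$(15)

$$\begin{aligned} f(x) \in V_j \iff f(2x) \in V_{j+1}, \end{aligned}$$(16)

for all \(f \in L^2(\mathbb{R })\) and all \(j \in \mathbb{Z }\);

$$\begin{aligned} f(x) \in V_0 \iff f(x-k) \in V_0, \end{aligned}$$(17)

for all \(f \in L^2(\mathbb{R })\) and all \(k \in \mathbb{Z }\); and there exists a function \(h \in V_0\) such that the sequence

$$\begin{aligned} (h(x-k))_{k \in \mathbb{Z }} \end{aligned}$$(18)

is a Riesz basis of \(V_0\).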

Recall that a sequence of functions \((h_k)_{k \in \mathbb{Z }}\) is a Riesz basis of a Hilbert space \(H \subseteq L^2(\mathbb{R })\) if there exist two constants \(C_1\) and \(C_2\), with \(C_1 > C_2 > 0\), such that, for every sequence of real or complex numbers \((a_k)_{k \in \mathbb{Z }} \in l^2(\mathbb{Z })\),

$$\begin{aligned} C_2 \sum _{k \in \mathbb{Z }} |a_k|^2 \le \left\Vert \sum _{k \in \mathbb{Z }} a_k h_k \right\Vert _2^2 \le C_1 \sum _{k \in \mathbb{Z }} |a_k|^2, \end{aligned}$$

and the vector space of finite linear combinations of the \(h_k\)’s is dense in \(H\).

Definition 4.2

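A multiresolution approximation \(V_j,\,j \in \mathbb{Z }\), is called \(r\)-regular \((r \in \mathbb{N }^+)\) if the function \(h\) in (18) is such that \(h \in C^r(\mathbb{R })\) and

$$\begin{aligned} \left| \frac{d^i h}{dx^i}(x) \right| \le C_m \, (1 + |x|)^{-m}, \quad x \in \mathbb{R }, \end{aligned}$$(19)

for each integer \(m \in \mathbb{N }^+\) and every integer index \(i\) with \(0 \le i \le r\), where the constants \(C_m > 0\) depend on \(m\) (and on \(i\)).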

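For every \(r\)-regular multiresolution approximation \(V_j,\,j \in \mathbb{Z }\), we can define the function \(\phi \in L^2(\mathbb{R })\), called the scaling function, by

$$\begin{aligned} {\widehat{\phi }}(v) := {\widehat{h}}(v) \left( \sum _{k \in \mathbb{Z }} |{\widehat{h}}(v + 2 \pi k)|^2 \right) ^{-1/2}, \quad v \in \mathbb{R }. \end{aligned}$$(20)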

In [30, Ch. 2], it is proved that \(\sum _{k \in \mathbb{Z }} |{\widehat{h}}(v + 2 \pi k)|^2 \ge c > 0\), hence, \(\phi \) is well-defined. Moreover, by the regularity of \(h\), we have, as a consequence of the Sobolev’s embedding theorem, that \(\sum _{k \in \mathbb{Z }} |{\widehat{h}}(v + 2 \pi k)|^2\) is a \(C^{\infty }(\mathbb{R })\) function. Furthermore, the family \((\phi (x-k))_{k \in \mathbb{Z }}\) turns out to be an orthonormal basis of \(V_0\), [17, 30], and from (16) and (17), we obtain by a simple change of scale that \((2^{j/2} \phi (2^jx-k))_{k \in \mathbb{Z }}\) forms an orthonormal basis of \(V_j\).
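For spline generators, the lower bound \(\sum _{k} |{\widehat{h}}(v + 2 \pi k)|^2 \ge c > 0\) can be made explicit. Assuming \(h\) is a (possibly shifted) B-spline of order \(n\), so that \(|{\widehat{h}}(v)| = |\sin (v/2)/(v/2)|^n\), the Poisson summation formula gives \(\sum _{k} |{\widehat{h}}(v + 2 \pi k)|^2 = \sum _{k} M_{2n}(k) e^{-ikv}\), a trigonometric polynomial; for \(n = 2\) it equals \((2 + \cos v)/3 \ge 1/3\). The Python sketch below compares this closed form with a truncated brute-force sum and reports its minimum over \([0, 2\pi ]\). The choice of generator and the function names are assumptions made for illustration.

```python
import math

def central_bspline(n, x):
    """Central B-spline M_n(x), n >= 2, via the truncated-power formula of Sect. 3."""
    if abs(x) >= n / 2:
        return 0.0
    s = sum((-1) ** i * math.comb(n, i) * (n / 2 + x - i) ** (n - 1)
            for i in range(n + 1) if n / 2 + x - i > 0)
    return s / math.factorial(n - 1)

def abs_hat_sq(n, v):
    """|h^(v)|^2 = (sin(v/2) / (v/2))^(2n) for a (possibly shifted) B-spline of order n."""
    return 1.0 if v == 0 else (math.sin(v / 2) / (v / 2)) ** (2 * n)

def gram_brute(n, v, K=2000):
    """Truncated sum_{|k| <= K} |h^(v + 2 pi k)|^2."""
    return sum(abs_hat_sq(n, v + 2 * math.pi * k) for k in range(-K, K + 1))

def gram_closed(n, v):
    """Poisson summation: sum_k |h^(v + 2 pi k)|^2 = sum_k M_{2n}(k) e^{-ikv}."""
    return central_bspline(2 * n, 0.0) + 2 * sum(
        central_bspline(2 * n, float(k)) * math.cos(k * v) for k in range(1, n))

for n in (2, 4):
    lowest = min(gram_closed(n, j * math.pi / 50) for j in range(101))   # v in [0, 2 pi]
    print(n, lowest)                   # strictly positive, i.e. the bound >= c > 0
```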

Now, by smoothness and periodicity of \(\left( \sum _{k \in \mathbb{Z }} |{\widehat{h}}(v + 2 \pi k)|^2 \right) ^{-1/2}\), the latter can be written by means of its Fourier series \(\sum _{k \in \mathbb{Z }} \alpha _k e^{i k v}\), where the coefficients \(\alpha _k\) decrease rapidly. We thus obtain \({\widehat{\phi }}(v) = \left( \sum _{k \in \mathbb{Z }} \alpha _k e^{i k v} \right) {\widehat{h}}(v)\) which gives \(\phi (x) = \sum _{k \in \mathbb{Z }} \alpha _k \, h(x+k)\), and then it follows that the scaling function \(\phi \) satisfies the estimates in (19). In particular, we have

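$$\begin{aligned} |\phi (x)| \le \widetilde{C}_{\alpha } \, (1 + |x|)^{-\alpha }, \quad x \in \mathbb{R }, \end{aligned}$$(21)

for some \(\widetilde{C}_{\alpha } > 0\) and for every integer \(\alpha \in \mathbb{N }^+\), i.e., \(\phi \) satisfies the decay estimate of condition \((\varphi 1)\) for every \(\alpha \in \mathbb{N }^+\).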

Let now \(E_j\) be the orthogonal projection of \(L^2(\mathbb{R })\) onto \(V_j\), given by

$$\begin{aligned} (E_j f)(x) := \sum _{k \in \mathbb{Z }} \left[ 2^j \int \limits _{\mathbb{R }} f(y) \, \overline{\phi }(2^j y - k) \, dy \right] \phi (2^j x - k), \end{aligned}$$(22)

where \(\overline{\phi }\) is the complex conjugate of \(\phi \). Let us define \(E(x,y) := \sum _{k \in \mathbb{Z }} \overline{\phi }(y-k) \, \phi (x-k)\), the kernel of the projection operator \(E_0\); hence, \(2^j \, E(2^jx,2^jy),\,j \in \mathbb{Z }\), is the kernel of the projection operator \(E_j\).

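In [30], the following remarkable property of the kernel \(E\) is also proved:

$$\begin{aligned} \int \limits _{\mathbb{R }} E(x,y) \, y^{\alpha } \, dy = x^{\alpha }, \quad x \in \mathbb{R }, \end{aligned}$$(23)

for every integer \(\alpha \) with \(0 \le \alpha \le r\). From (23) with \(\alpha = 0\), the integral property

$$\begin{aligned} \int \limits _{\mathbb{R }} E(x,y) \, dy = 1, \quad x \in \mathbb{R }, \end{aligned}$$(24)

follows. Moreover, since \(\phi \) satisfies (21), it is easy to see that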

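$$\begin{aligned} |E(x,y)| \le \overline{C}_{\alpha } \, (1 + |x - y|)^{-\alpha }, \quad x,y \in \mathbb{R }, \end{aligned}$$(25)

for every \(\alpha \in \mathbb{N }^+\), where \(\overline{C}_{\alpha }>0\). Hence, \(E\) is a bivariate kernel satisfying conditions (2) and (3). Then, by Lemma 2.3 with \(w = 2^j\), we infer that \(\Vert E_j f - f \Vert _p \rightarrow 0\) as \(j \rightarrow + \infty \), for every \(f \in L^p(\mathbb{R })\) and \(1 \le p < \infty \). Moreover, exploiting the properties of the projection operators \(E_j\), the quantity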

can be defined, which satisfies the condition \(\Sigma (x,0) = 1\), for every \(x \in \mathbb{R }\), [30]. This yields

Now, we can adjust the scaling function \(\phi \) merely by multiplying \({\widehat{\phi }}\) by a suitable constant of modulus \(1\), so that \({\widehat{\phi }}(0) = \int _{\mathbb{R }} \phi (t) \, dt = 1\), while preserving all the other properties [30]. By the regularity of \(\phi \), the Poisson summation formula holds, and from (24) we obtain

$$\begin{aligned} \sum _{k \in \mathbb{Z }} \phi (x - k) = 1, \quad x \in \mathbb{R }, \end{aligned}$$

i.e., the scaling function \(\phi \) satisfies condition \((\varphi 2)\). Using (4), we can now consider the function \(\sigma _{\phi }\) constructed from the scaling function \(\phi \). Clearly, if \(\phi \) is real-valued, \(\sigma _{\phi }\) is a sigmoidal function. We then have the following

Theorem 4.3

Let \(\phi \) be a real-valued scaling function, as constructed above, associated with an \(r\)-regular multiresolution approximation of \(L^2(\mathbb{R })\).

-

(i)

Then, for any \(f \in AC[a,b]\), the sequence of operators \((S_j^{\sigma _{\phi }} f)_{j \in \mathbb{N }^+}\), defined by

$$\begin{aligned} (S_j^{\sigma _{\phi }} f)(x) := \sum _{k \in \mathbb{Z }} \left[ \int \limits _a^b \phi (2^j y - k) \, f'(y) \, dy \right] \sigma _{\phi }(2^j x - k) + f(a), \end{aligned}$$for every \(x \in [a,b]\), converges uniformly to \(f\) on \([a,b]\). In particular, if \(f \in {\widehat{C}}^1[a,b]\), we have

$$\begin{aligned} \Vert S_j^{\sigma _{\phi }} f - f \Vert _{\infty } \le C \, 2^{-j}, \end{aligned}$$for some positive constant \(C\) and for every positive integer \(j\).

-

(ii)

Denote by \(S_{N, j}^{\sigma _{\phi }} f,\,N \in \mathbb{N }^+\), the truncation of the series \(S_j^{\sigma _{\phi }} f\), i.e.,

$$\begin{aligned} (S_{N,j}^{\sigma _{\phi }} f)(x) := \sum _{k = -N}^N \left[ \int \limits _a^b \phi (2^j y - k) \, f'(y) \, dy \right] \sigma _{\phi }(2^j x - k) + f(a). \end{aligned}$$Then, for every \(f \in {\widehat{C}}^1[a,b]\), we have

$$\begin{aligned} \Vert S_{N,j}^{\sigma _{\phi }} f - f \Vert _{\infty } \le C_1 \, 2^{-j} + C_{2, \alpha } \left\{ (N-2^jb+1)^{-(\alpha -1)} + (N+2^j a+1)^{-(\alpha -1)}\right\} , \end{aligned}$$for some positive constants \(C_1\) and \(C_{2,\alpha }\), for every \(j \in \mathbb{N }^+\), and \(N > 2^j \max \left\{ |a|, |b| \right\} \), where \(\alpha \in \mathbb{N }^+\) is an arbitrary integer.

The proof of Theorem 4.3 (i) follows along the lines of the proof of Theorem 2.6, taking into account that the sequence \((E_j f)_{j \in \mathbb{Z }},\,f \in L^1(\mathbb{R })\), converges to \(f\) in \(L^1(\mathbb{R })\) as \(j \rightarrow +\infty \). Moreover, the proof of Theorem 4.3 (ii) follows along the lines of the proof of Theorem 3.2 (ii), using condition (21) and Lemma 3.1 with \(2^j\) in place of \(w\).

Remark 4.4

Note that, in the special setting of \(r\)-regular multiresolution approximations, we are able to prove that the real-valued scaling functions \(\phi \) constructed above belong to \(\Phi \). Moreover, condition (16) in Definition 4.1 allows us to take the weights in the basis \((2^{j/2} \phi (2^jx-k))_{k \in \mathbb{Z }}\), and hence in the series \(S_j^{\sigma _{\phi }}f\), equal to \(2^j\), i.e., the weights increase exponentially with respect to \(j\). Consequently, the approximation error for \(C^1\)-functions decreases as \(2^{-j}\). Moreover, conditions (19) and (21) are crucial to prove that the truncation error also decreases rapidly.

Examples of \(r\)-regular multiresolution approximations satisfying the conditions above can be given by taking \(h\) to be generated by spline wavelets of order \(r+1\), namely

$$\begin{aligned} h_r(x) := \frac{1}{r!} \sum _{i=0}^{r+1} (-1)^i \binom{r+1}{i} (x - i)_+^{r}, \quad x \in \mathbb{R }, \end{aligned}$$

which is just a shifted central B-spline: \(h_r(x) = M_{r+1}(x - (r+1)/2)\). Equivalently, \(h_r\) can be defined as the convolution of \(r+1\) characteristic functions of the interval \([0,1)\), see [35]. Similarly, the central B-spline \(M_n\) can be defined as the convolution of \(n\) characteristic functions of the interval \([-1/2,1/2)\), see [5]. The Fourier transform of \(h_r\) is then easily obtained:

$$\begin{aligned} \widehat{h_r}(v) = \left( \frac{1 - e^{-iv}}{iv} \right) ^{r+1}, \quad v \ne 0, \qquad \widehat{h_r}(0) = 1. \end{aligned}$$

The scaling function \(\phi \) associated with the spline wavelet multiresolution approximation can be obtained using (20) and the normalization procedure described above, see [18, 30, 35].

5 An extension of the theory for neural networks approximation

The theory developed in the previous sections for approximation by series of sigmoidal functions based on \(\sigma _{\phi }\) is beset by the technical difficulty of checking that \(\phi \) satisfies condition \((\varphi 2)\). For this purpose, one could use the condition given in Remark 2.2; however, this does not simplify the problem, since evaluating the Fourier transform of a given function is often a difficult task. Moreover, as noted in Remark 3.4, the sigmoidal functions most used in NN approximation do not satisfy \((\varphi 2)\). Below, we propose an extension of the theory developed in the previous sections, aimed at approximations by NNs activated by sigmoidal functions \(\sigma _{\phi }\), without assuming that \(\phi \) satisfies condition \((\varphi 2)\).

Throughout this section, we consider functions \(\phi : \mathbb{R }\rightarrow \mathbb{R }^+_0\) with \(\int _{\mathbb{R }} \phi (t)\, dt = 1\) that satisfy condition \((\varphi 1)\) with \(\alpha > 2\). Moreover, we set

and assume in addition that \(\psi _{\phi }\) satisfies:

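$$\begin{aligned} (\Psi 1) \quad |\psi _{\phi }(t)| \le A \, (1 + |t|)^{-\alpha }, \end{aligned}$$

for every \(t \in \mathbb{R }\) and some \(A > 0\), where \(\alpha > 2\) is the constant of condition \((\varphi 1)\). We denote by \(\mathcal{T }\) the set of all functions \(\phi \) satisfying these conditions. We can now prove the following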

Lemma 5.1

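For any given \(\phi \in \mathcal{T }\), the relation

$$\begin{aligned} \sum _{k \in \mathbb{Z }} \psi _{\phi }(x - k) = 1, \quad x \in \mathbb{R }, \end{aligned}$$

holds.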

Proof

Let \(x \in \mathbb{R }\) be fixed. Then,

since the sum is telescopic. Passing to the limit for \(N \rightarrow +\infty \), we obtain immediately

\(\square \)
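The telescoping argument can be checked numerically for the logistic function. The Python sketch below assumes \(\psi _{\phi }(x) = \sigma _{\phi }(x) - \sigma _{\phi }(x-1)\), one choice for which the partial sums telescope to \(\sigma _{\phi }(x+N) - \sigma _{\phi }(x-N-1)\); this form is an assumption made here for illustration, together with \(\phi = \phi _{\ell }\) from Remark 3.4. Unlike the periodization of \(\phi _{\ell }\) itself, these sums tend to \(1\) for every \(x\). The function names are hypothetical.

```python
import math

def sigma_logistic(x):
    """Logistic sigmoidal function 1 / (1 + e^{-x}), evaluated without overflow."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def psi(x):
    """Assumed form psi_phi(x) = sigma_phi(x) - sigma_phi(x - 1), here with phi = phi_l."""
    return sigma_logistic(x) - sigma_logistic(x - 1.0)

def partial_sum(x, N):
    """sum_{k=-N}^{N} psi(x - k); by telescoping this is sigma(x + N) - sigma(x - N - 1)."""
    return sum(psi(x - k) for k in range(-N, N + 1))

for x in (0.0, 0.3, -1.7):
    print(x, partial_sum(x, 5), partial_sum(x, 60))   # the N = 60 values equal 1 up to rounding
```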

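Let us now introduce the bivariate kernel

$$\begin{aligned} K_{\phi , \psi }(x,y) := \sum _{k \in \mathbb{Z }} \psi _{\phi }(x - k) \, \phi (y - k), \quad x,y \in \mathbb{R }. \end{aligned}$$

As done in Sect. 2 for the kernel \(K_{\phi }\), one can show, using Lemma 5.1 and conditions \((\varphi 1)\) and \((\Psi 1)\), that \(K_{\phi , \psi }\) satisfies both (2) and (3). Now, for any given \(\phi \in \mathcal{T }\), we consider the family of operators \((F_w^{\phi })_{w>0}\) defined by

$$\begin{aligned} (F_w^{\phi } f)(x) := w \int \limits _{\mathbb{R }} K_{\phi , \psi }(w x, w y) \, f(y) \, dy = \sum _{k \in \mathbb{Z }} \left[ w \int \limits _{\mathbb{R }} \phi (w y - k) \, f(y) \, dy \right] \psi _{\phi }(w x - k), \quad x \in \mathbb{R }, \end{aligned}$$

for every bounded \(f: \mathbb{R }\rightarrow \mathbb{R }\) and \(w > 0\).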

Remark 5.2

Note that, by Lemma 2.3, for every uniformly continuous and bounded function \(f\), the family of operators \((F_w^{\phi }f)_{w>0}\) converges uniformly to \(f\) on \(\mathbb{R }\), as \(w\rightarrow +\infty \).

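To study the order of approximation of the operators above, we use the following Lipschitz class of Zygmund type:

$$\begin{aligned} \text{ Lip }(\nu ) := \left\{ f \in C(\mathbb{R }): \ \Vert f(\cdot + t) - f(\cdot ) \Vert _{\infty } = \mathcal{O }(|t|^{\nu }), \ \text{ as } t \rightarrow 0 \right\} , \end{aligned}$$

for every \(0 < \nu \le 1\), where here \(\Vert \cdot \Vert _{\infty }\) denotes the sup norm on \(\mathbb{R }\). We can now prove the following lemma concerning the order of approximation of \((F_w^{\phi }f)_{w>0}\) to \(f\):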

Lemma 5.3

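Let \(f \in \text{ Lip }(\nu ),\,0 < \nu \le 1\), be a fixed bounded function. Then there exist \(C_1 > 0\) and \(C_2 > 0\) such that

$$\begin{aligned} \Vert F_w^{\phi } f - f \Vert _{\infty } \le C_1 \, w^{-\nu } + C_2 \, w^{-(\alpha -1)}, \end{aligned}$$

for every sufficiently large \(w > 0\).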

Proof

Let \(x \in \mathbb{R }\) be fixed. Since \(f \in \text{ Lip }(\nu )\), there exist \(M > 0\) and \(\gamma > 0\) such that

$$\begin{aligned} |f(x + t) - f(x)| \le M \, |t|^{\nu }, \end{aligned}$$(26)

for every \(|t| \le \gamma \). Moreover, we infer from condition (2)

and then we can write

Let us first estimate \(J_1\). From (3) and (26), by the change of variable \(y = (t/w) + x\), and since \(f \in \text{ Lip }(\nu )\), we obtain for \(w > 0\) sufficiently large

where \(\widetilde{L} > 0\) is a suitable constant. Now, since \(\alpha > 2 \ge \nu + 1\), we have \(\widetilde{L} \int _{\mathbb{R }}(1+|t|)^{-\alpha } \, |t|^{\nu } \, dt =: C_1 < +\infty \), and hence \(J_1 \le C_1 \, w^{-\nu }\) for \(w>0\) sufficiently large. Moreover, setting \(t = w y\) and using again condition (3), we have

where \(\overline{L}\) is a suitable positive constant. Setting now \(z = t - w x\) in the last integral, we obtain

for every \(w > 0\). This completes the proof. \(\square \)

We can now prove the following

Theorem 5.4

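Let \(\phi \in \mathcal{T }\) be fixed. Define the NNs

$$\begin{aligned} (N_{N,w}^{\phi } f)(x) := \sum _{k=-N}^{N} \left[ w \int \limits _{\mathbb{R }} \phi (w y - k) \, f(y) \, dy \right] \psi _{\phi }(w x - k), \quad x \in \mathbb{R }, \end{aligned}$$

where \(w > 0,\,N \in \mathbb{N }^+\), and \(f: \mathbb{R }\rightarrow \mathbb{R }\) is a bounded function on \(\mathbb{R }\).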

-

(i)

Let \(f \in C[a,b]\) be fixed. Then, for every \(\varepsilon > 0\), there exist \(w > 0\) and \(N > w \max \left\{ |a|,\, |b| \right\} \), such that

$$\begin{aligned} \Vert N_{N,w}^{\phi } {\widetilde{f}} - f \Vert _{\infty } = \sup _{x \in [a,b]}|(N_{N,w}^{\phi } {\widetilde{f}})(x) - f(x)| < \varepsilon , \end{aligned}$$

where \({\widetilde{f}}\) is a continuous extension of \(f\) such that \({\widetilde{f}}\) has compact support and \({\widetilde{f}} = f\) on \([a,b]\).

-

(ii)

Let \(f \in \text{ Lip }(\nu ),\,0<\nu \le 1\), and \([a,b] \subset \mathbb{R }\) be fixed. Then, we have

$$\begin{aligned}&\Vert N_{N,w}^{\phi } f - f \Vert _{\infty } = \sup _{x \in [a,b]}|(N_{N,w}^{\phi } f)(x)-f(x)| \\&\quad \le C_1 \, w^{- \nu } + C_2 \, w^{-(\alpha -1)} + C_3 \left\{ (N-wb+1)^{-(\alpha -1)} + (N+wa+1)^{-(\alpha -1)} \right\} , \end{aligned}$$for every sufficiently large \(w>0\) and \(N > w \max \left\{ |a|, |b|\right\} \), for some positive constants \(C_1,\,C_2\), and \(C_3\).

Proof

-

(i)

Suppose for the sake of simplicity that \(\Vert f\Vert _{\infty } = \Vert {\widetilde{f}}\Vert _{\infty }\), and note that \({\widetilde{f}}\) is uniformly continuous. Let now \(\varepsilon > 0\) and \(x \in [a,b]\) be fixed. We can write

$$\begin{aligned} |(N_{N,w}^{\phi } {\widetilde{f}})(x) - f(x)|&\le |f(x) - (F_{w}^{\phi } {\widetilde{f}})(x)| + |(F_{w}^{\phi } {\widetilde{f}})(x) - (N_{N,w}^{\phi } {\widetilde{f}})(x)| \\&=: I_1 + I_2. \end{aligned}$$By Remark 5.2 we have \(I_1 < \varepsilon \) for \(w > 0\) sufficiently large. Moreover,

$$\begin{aligned} I_2 \le \sum _{|k|> N} w \left[ \int \limits _{\mathbb{R }} \phi (w y - k) \, |{\widetilde{f}}(y)| \, dy \right] \psi _{\phi }(w x - k). \end{aligned}$$

Now, \(w \int \limits _{\mathbb{R }} \phi (w y - k)\, dy = 1\) for every \(k \in \mathbb{Z }\); moreover, since \((\Psi 1)\) holds, the estimate given in Lemma 3.1 for \(\phi \) also holds for \(\psi _{\phi }\). Hence, for every fixed and sufficiently large \(w > 0\), we have

$$\begin{aligned} I_2&\le \Vert f \Vert _{\infty } \sup _{x \in [a,b]} \sum _{|k|> N} \left[ w \int \limits _{\mathbb{R }} \phi (w y - k) \, dy \right] \psi _{\phi }(w x - k) \\&= \Vert f \Vert _{\infty } \left[ \sup _{x \in [a,b]} \sum _{|k|>N} \psi _{\phi }(w x - k) \right] \\&< \Vert f \Vert _{\infty } \widetilde{C} \left\{ (N-wb+1)^{-(\alpha -1)} + (N+wa+1)^{-(\alpha -1)} \right\} < \varepsilon , \end{aligned} \tag{27}$$

for some positive constant \(\widetilde{C}\) and every sufficiently large \(N \in \mathbb{N }^+\) with \(N > w \max \left\{ |a|, |b|\right\} \). Since \(\varepsilon >0\) is arbitrary, (i) is proved.

(ii) Now let \(f \in \text{Lip}(\nu )\) be fixed, and let \(I_1\) and \(I_2\) be defined as in (i), with \(f\) in place of \({\widetilde{f}}\). By Lemma 5.3, we have

$$\begin{aligned} I_1 \le C_1 \, w^{- \nu } + C_2 \, w^{-(\alpha -1)}, \end{aligned}$$

for every sufficiently large \(w > 0\) and for some positive constants \(C_1\) and \(C_2\). Moreover, we obtain from (27)

$$\begin{aligned} I_2 \le C_3 \left\{ (N-wb+1)^{-(\alpha -1)} + (N+wa+1)^{-(\alpha -1)} \right\} , \end{aligned}$$

for a suitable constant \(C_3 > 0\). This proves (ii).

\(\square \)
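Estimate (27) controls the truncation tail \(\sup _{x \in [a,b]} \sum _{|k|>N} \psi _{\phi }(w x - k)\). The sketch below checks the shape of that bound numerically. It assumes NumPy and replaces \(\psi _{\phi }\), whose definition and decay conditions appear earlier in the paper, by a hypothetical kernel \(\psi (x) = (1+|x|)^{-\alpha }\) with \(\alpha > 1\). For this toy kernel, comparing each tail sum with an integral gives the explicit constant \(1/(\alpha -1)\) in place of \(\widetilde{C}\), valid whenever \(N > w \max \{|a|,|b|\}\); the helper names are illustrative.

```python
import numpy as np


def psi_toy(x, alpha):
    # Hypothetical stand-in for psi_phi: algebraic decay of order alpha > 1.
    return (1.0 + np.abs(x)) ** (-alpha)


def tail_sup(a, b, w, N, alpha, K=50_000, n_x=101):
    """Grid approximation of sup_{x in [a, b]} sum_{N < |k| <= K} psi_toy(w x - k).

    All terms are positive, so truncating at K and sampling x on a grid can only
    underestimate the true tail.
    """
    ks = np.arange(N + 1, K + 1, dtype=float)
    best = 0.0
    for x in np.linspace(a, b, n_x):
        # k > N contributes psi(w x - k); k < -N contributes psi(w x + |k|).
        tail = psi_toy(w * x - ks, alpha).sum() + psi_toy(w * x + ks, alpha).sum()
        best = max(best, float(tail))
    return best


def tail_bound(a, b, w, N, alpha):
    """Integral-comparison bound for the toy kernel, with constant 1 / (alpha - 1)."""
    if not N > w * max(abs(a), abs(b)):
        raise ValueError("the bound requires N > w * max(|a|, |b|)")
    return ((N - w * b + 1) ** (1 - alpha) + (N + w * a + 1) ** (1 - alpha)) / (alpha - 1)


for N in (20, 40, 80):
    print(N, tail_sup(-1.0, 1.0, 10.0, N, 3.0), tail_bound(-1.0, 1.0, 10.0, N, 3.0))
```

For \(a=-1\), \(b=1\), \(w=10\), \(\alpha =3\), and \(N=20\), the bound equals \(1/121\), and the computed tail lies below it; increasing \(N\) makes both smaller. The same bound suggests how to size the truncation in (ii): since \(N - wb + 1\) and \(N + wa + 1\) are both at least \(N + 1 - w \max \{|a|,|b|\}\), choosing \(N \ge w \max \{|a|,|b|\} + w^{\nu /(\alpha -1)}\) makes the last term of the estimate in (ii) smaller than \(2 C_3 w^{-\nu }\), so the total error is of order \(w^{-\nu } + w^{-(\alpha -1)}\).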

As a first example, consider the logistic function \(\sigma _{\ell }(x) := (1 + e^{-x})^{-1}\) (see, e.g., [8]), generated by \(\phi _{\ell }(x) := e^{-x} (1 + e^{-x})^{-2}\). Conditions \((\varphi 1)\) and \((\Psi 1)\) are clearly fulfilled, since both \(\phi _{\ell }\) and the associated function \(\psi _{\ell }\) decay exponentially as \(x \rightarrow \pm \infty \). A second example is given by the hyperbolic tangent sigmoidal function (see, e.g., [1, 2])

$$\begin{aligned} \sigma _h(x) := \frac{1}{2} \left( \tanh x + 1 \right) , \quad x \in \mathbb{R }. \end{aligned}$$

This can be generated by \(\phi _h(x) = 2 \, e^{2 x} \, (e^{2 x} + 1)^{-2}\), whose associated function \(\psi _{h}\) is

It can be easily checked that such a function \(\phi _h\) belongs to \(\mathcal{T }\).
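Both examples arise through the integral construction \(\sigma _{\phi }(x) = \int _{-\infty }^x \phi (t)\, dt\) of the introduction. The sketch below checks this numerically for \(\phi _{\ell }\) and \(\phi _h\), assuming only NumPy: the lower limit \(-\infty \) is replaced by \(-40\) and the integral is computed with the trapezoidal rule (the helper names are illustrative). The closed forms used for comparison are \(\sigma _{\ell }(x) = (1+e^{-x})^{-1}\) and \(\sigma _h(x) = (\tanh x + 1)/2\).

```python
import numpy as np


def phi_logistic(t):
    # phi_ell(t) = e^{-t} (1 + e^{-t})^{-2}
    return np.exp(-t) / (1.0 + np.exp(-t)) ** 2


def phi_tanh(t):
    # phi_h(t) = 2 e^{2t} (e^{2t} + 1)^{-2}
    return 2.0 * np.exp(2.0 * t) / (np.exp(2.0 * t) + 1.0) ** 2


def sigma_from_phi(phi, x, lower=-40.0, n=20_001):
    """Trapezoidal approximation of sigma_phi(x) = int_{-inf}^{x} phi(t) dt.

    The lower limit -inf is replaced by `lower`; both kernels decay exponentially
    as t -> -inf, so the neglected mass is below e^{lower}.
    """
    t = np.linspace(lower, x, n)
    y = phi(t)
    return float(np.sum((y[1:] + y[:-1]) * np.diff(t)) / 2.0)


def sigma_logistic(x):
    return 1.0 / (1.0 + np.exp(-x))


def sigma_h(x):
    return 0.5 * (np.tanh(x) + 1.0)


for x in (-3.0, 0.0, 1.5):
    print(x, sigma_from_phi(phi_logistic, x), sigma_logistic(x),
          sigma_from_phi(phi_tanh, x), sigma_h(x))
```

Rewriting the kernels as \(\phi _{\ell }(t) = 1/(4\cosh ^2(t/2))\) and \(\phi _h(t) = 1/(2\cosh ^2 t)\) shows that \(\phi _h(t) = 2\phi _{\ell }(2t)\), hence \(\sigma _h(x) = \sigma _{\ell }(2x)\): the two examples differ only by a rescaling of the variable.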

Finally, we recall that another remarkable example of sigmoidal function is provided by the class of Gompertz functions, defined by

$$\begin{aligned} \sigma _{\alpha \beta }(x) := e^{-\alpha e^{-\beta x}}, \quad x \in \mathbb{R }, \end{aligned}$$

for \(\alpha ,\,\beta > 0\). Gompertz functions are widely used in fields such as demography and tumor growth modeling.
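Assuming the usual normalized form \(e^{-\alpha e^{-\beta x}}\), \(\alpha ,\beta > 0\), a Gompertz function also fits the integral construction of the introduction, with generating function \(\phi (x) = \alpha \beta \, e^{-\beta x} e^{-\alpha e^{-\beta x}} \ge 0\), its derivative. The sketch below (NumPy only; the helper names and the parameters \(\alpha = 1\), \(\beta = 2\) are arbitrary illustrative choices) checks numerically that this function has the limits 0 and 1 and that integrating \(\phi \) recovers it.

```python
import numpy as np


def gompertz(x, alpha, beta):
    # Assumed normalized Gompertz sigmoid: exp(-alpha * exp(-beta * x)).
    return np.exp(-alpha * np.exp(-beta * x))


def gompertz_phi(x, alpha, beta):
    # Its derivative: alpha * beta * e^{-beta x} * exp(-alpha * e^{-beta x}) >= 0.
    e = np.exp(-beta * x)
    return alpha * beta * e * np.exp(-alpha * e)


def trapezoid(y, t):
    # Composite trapezoidal rule on the grid t.
    return float(np.sum((y[1:] + y[:-1]) * np.diff(t)) / 2.0)


def gompertz_from_phi(x, alpha, beta, lower=-15.0, n=20_001):
    # int_{lower}^{x} phi(t) dt; the mass below `lower` equals gompertz(lower),
    # which is numerically zero for the parameters used here.
    t = np.linspace(lower, x, n)
    return trapezoid(gompertz_phi(t, alpha, beta), t)


alpha, beta = 1.0, 2.0
for x in (-1.0, 0.0, 2.0):
    print(x, gompertz_from_phi(x, alpha, beta), gompertz(x, alpha, beta))
```

Unlike \(\phi _{\ell }\) and \(\phi _h\), which are even, this generating function is asymmetric: it vanishes doubly exponentially as \(x \rightarrow -\infty \), but only exponentially, like \(\alpha \beta e^{-\beta x}\), as \(x \rightarrow +\infty \).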

Remark 5.5

Note, finally, that approximating functions by the NNs \(G^{\sigma _{\phi }}_{N,w}\) requires only half as many sigmoidal functions as approximating them by the NNs \(N^{\phi }_{N,w}\). However, the theory developed in this section applies to important sigmoidal functions for which the theory of Sects. 2 and 3 cannot be used.

References

[1] Anastassiou, G.A.: Univariate hyperbolic tangent neural network approximation. Math. Comput. Model. 53(5–6), 1111–1132 (2011)

[2] Anastassiou, G.A.: Multivariate hyperbolic tangent neural network approximation. Comput. Math. Appl. 61(4), 809–821 (2011)

[3] Barron, A.R.: Universal approximation bounds for superpositions of a sigmoidal function. IEEE Trans. Inf. Theory 39(3), 930–945 (1993)

[4] Brauer, F., Castillo-Chavez, C.: Mathematical Models in Population Biology and Epidemiology. Springer, New York (2001)

[5] Butzer, P.L., Nessel, R.J.: Fourier Analysis and Approximation. Birkhäuser Verlag, Basel and Academic Press, New York (1971)

[6] Butzer, P.L., Splettstößer, W., Stens, R.L.: The sampling theorem and linear prediction in signal analysis. Jahresber. Deutsch. Math.-Verein 90, 1–70 (1988)

[7] Buzhabadi, R., Effati, S.: A neural network approach for solving Fredholm integral equations of the second kind. Neural Comput. Appl. 21, 843–852 (2012)

[8] Cao, F., Chen, Z.: The approximation operators with sigmoidal functions. Comput. Math. Appl. 58(4), 758–765 (2009)

[9] Chen, H., Chen, T., Liu, R.: A constructive proof and an extension of Cybenko’s approximation theorem. In: Computing Science and Statistics, pp. 163–168. Springer, New York (1992)

[10] Chen, D.: Degree of approximation by superpositions of a sigmoidal function. Approx. Theory Appl. 9(3), 17–28 (1993)

[11] Chui, C.K.: An Introduction to Wavelets, Wavelet Analysis and its Applications 1. Academic Press Inc., Boston (1992)

[12] Costarelli, D., Spigler, R.: Solving Volterra integral equations of the second kind by sigmoidal functions approximations, to appear in J. Integral Eq. Appl. 25(2) (2013)

[13] Costarelli, D., Spigler, R.: Constructive approximation by superposition of sigmoidal functions. Anal. Theory Appl. 29(2), 169–196 (2013)

[14] Costarelli, D., Spigler, R.: Approximation results for neural network operators activated by sigmoidal functions. Neural Netw. 44, 101–106 (2013)

[15] Costarelli, D., Spigler, R.: Multivariate neural network operators with sigmoidal activation functions. Neural Netw. 48, 72–77 (2013)

[16] Cybenko, G.: Approximation by superpositions of a sigmoidal function. Math. Control Signals Syst. 2, 303–314 (1989)

[17] Daubechies, I.: Ten Lectures on Wavelets, Regional Conference Series in Applied Mathematics 61. Society for Industrial and Applied Mathematics, SIAM, Philadelphia (1992)

[18] De Boor, C.: A Practical Guide to Splines, Applied Mathematical Sciences 27. Springer, New York (2001)

[19] Gao, B., Xu, Y.: Univariant approximation by superpositions of a sigmoidal function. J. Math. Anal. Appl. 178, 221–226 (1993)

[20] Hahm, N., Hong, B.: Approximation order to a function in \(\overline{C}({\mathbb{R}})\) by superposition of a sigmoidal function. Appl. Math. Lett. 15, 591–597 (2002)

[21] Hritonenko, N., Yatsenko, Y.: Mathematical Modelling in Economics, Ecology and the Environment. Science Press, Beijing (2006)

[22] Jones, L.K.: Constructive approximations for neural networks by sigmoidal functions, Technical Report Series 7. University of Lowell, Dep. of Mathematics (1988)

[23] Lenze, B.: Constructive multivariate approximation with sigmoidal functions and applications to neural networks. In: Numer. Methods Approx. Theory, Birkhäuser Verlag, Basel-Boston-Berlin, pp. 155–175 (1992)

[24] Lewicki, G., Marino, G.: Approximation by superpositions of a sigmoidal function. Z. Anal. Anwendungen J. Anal. Appl. 22(2), 463–470 (2003)

[25] Lewicki, G., Marino, G.: Approximation of functions of finite variation by superpositions of a sigmoidal function. Appl. Math. Lett. 17, 1147–1152 (2004)

[26] Li, X.: Simultaneous approximations of multivariate functions and their derivatives by neural networks with one hidden layer. Neurocomputing 12, 327–343 (1996)

[27] Li, X., Micchelli, C.A.: Approximation by radial bases and neural networks. Numer. Algorithms 25, 241–262 (2000)

[28] Malek, A., Shekari Beidokhti, R.: Numerical solution for high order differential equations using a hybrid neural network—optimization method. Appl. Math. Comput. 183, 260–271 (2006)

[29] Mallat, S.G.: Multiresolution approximations and wavelet orthonormal bases of \(L^2({\mathbb{R}})\). Trans. Am. Math. Soc. 315, 69–87 (1989)

[30] Meyer, Y.: Wavelets and Operators. Cambridge Studies in Advanced Mathematics 37, Cambridge (1992)

[31] Mhaskar, H.N., Micchelli, C.A.: Approximation by superposition of sigmoidal and radial basis functions. Adv. Appl. Math. 13, 350–373 (1992)

[32] Mhaskar, H.N., Micchelli, C.A.: Degree of approximation by neural and translation networks with a single hidden layer. Adv. Appl. Math. 16, 151–183 (1995)

[33] Mhaskar, H.N.: Neural networks for optimal approximation of smooth and analytic functions. Neural Comput. 8, 164–177 (1996)

[34] Pinkus, A.: Approximation theory of the MLP model in neural networks. Acta Numer. 8, 143–195 (1999)

[35] Unser, M.: Ten good reasons for using spline wavelets. Wavelets Appl. Signal Image Process. 3169(5), 422–431 (1997)

[36] Xiehua, S.: On the degree of approximation by wavelet expansions. Approx. Theory Appl. 14(1), 81–90 (1998)

Acknowledgments

This work was supported, in part, by the GNAMPA and the GNFM of the Italian INdAM.


Cite this article

Costarelli, D., Spigler, R.: Approximation by series of sigmoidal functions with applications to neural networks. Annali di Matematica 194, 289–306 (2015). https://doi.org/10.1007/s10231-013-0378-y


DOI: https://doi.org/10.1007/s10231-013-0378-y

Keywords

- Sigmoidal functions

- Neural networks approximation

- Order of approximation

- Truncation error

- Multiresolution approximation

- Wavelet-scaling functions