Abstract

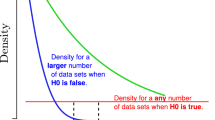

Research on bias in machine learning algorithms has generally been concerned with the impact of bias on predictive accuracy. We believe that there are other factors that should also play a role in the evaluation of bias. One such factor is the stability of the algorithm; in other words, the repeatability of the results. If we obtain two sets of data from the same phenomenon, with the same underlying probability distribution, then we would like our learning algorithm to induce approximately the same concepts from both sets of data. This paper introduces a method for quantifying stability, based on a measure of the agreement between concepts. We also discuss the relationships among stability, predictive accuracy, and bias.
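The abstract does not spell out the measure, so what follows is only a minimal sketch under stated assumptions. It takes the agreement between two concepts to be the fraction of instances, drawn from the same distribution, on which both concepts assign the same class. It then estimates stability as the average agreement between concepts induced from pairs of independent training sets. The phenomenon (`sample`), the threshold learner (`learn_stump`) and all parameter values are hypothetical illustrations. They are not the paper's definitions or experimental setup.

```python
import random
from typing import Callable, List, Tuple

Example = Tuple[float, int]        # (feature value, class label)
Concept = Callable[[float], int]   # an induced concept maps an instance to a class


def sample(n: int, rng: random.Random) -> List[Example]:
    """Hypothetical phenomenon: x ~ U(0, 1), class 1 iff x > 0.5, with 10% label noise."""
    data = []
    for _ in range(n):
        x = rng.random()
        y = int(x > 0.5)
        if rng.random() < 0.1:     # flip the label to simulate noise
            y = 1 - y
        data.append((x, y))
    return data


def learn_stump(data: List[Example]) -> Concept:
    """Hypothetical learner: choose the training x value whose threshold makes fewest training errors."""
    best_t, best_err = 0.0, len(data) + 1
    for t, _ in data:
        err = sum(int(x > t) != y for x, y in data)
        if err < best_err:
            best_t, best_err = t, err
    return lambda x: int(x > best_t)


def agreement(c1: Concept, c2: Concept, instances: List[float]) -> float:
    """Assumed agreement measure: fraction of instances on which two concepts give the same class."""
    return sum(c1(x) == c2(x) for x in instances) / len(instances)


def estimate_stability(learner: Callable[[List[Example]], Concept],
                       n_train: int, n_test: int, trials: int, seed: int = 0) -> float:
    """Average agreement between concepts learned from independent samples of one distribution."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        c1 = learner(sample(n_train, rng))             # first training set
        c2 = learner(sample(n_train, rng))             # second, independent training set
        test = [x for x, _ in sample(n_test, rng)]     # fresh instances for comparing the concepts
        total += agreement(c1, c2, test)
    return total / trials


if __name__ == "__main__":
    print(estimate_stability(learn_stump, n_train=50, n_test=1000, trials=20))
```

A value near 1 means the learner induces nearly the same concept from different samples of the same phenomenon. Note that this agreement score never consults the true class labels of the test instances, so it measures repeatability separately from predictive accuracy.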

About this article

Cite this article

Turney, P. Technical Note: Bias and the Quantification of Stability. Machine Learning 20, 23–33 (1995). https://doi.org/10.1023/A:1022682001417

DOI: https://doi.org/10.1023/A:1022682001417